A data-driven digital marketing agency in Kolkata helps businesses achieve targeted and measurable online growth by offering services such as SEO, paid advertising management, conversion rate optimization, content marketing, and website design. These agencies develop marketing strategies tailored to the specific goals and needs of each business by analyzing real data and utilizing specialized tools.

Receiving a free audit from these agencies provides a comprehensive review of your SEO, content, social media, advertising, and website performance at no cost, clearly identifying paths for improvement and increased efficiency. Businesses such as startups, online stores, local service providers, and real estate agencies benefit the most from these services.

When choosing an agency, diversity of services, track record of success, transparency, strategy personalization, and industry experience are important factors. Data-driven agencies ensure your investment delivers optimal returns by providing ongoing reporting and setting key performance indicators, and, with a deep understanding of Kolkata’s local market, they offer strategies tailored to the city’s competitive environment.

Data migration consulting services minimize the risks of transferring organizational data to new systems by providing expertise and professional guidance. Consultants manage the migration securely and efficiently by thoroughly assessing the current system, planning around the organization's needs, protecting data security and integrity, minimizing system downtime, and complying with legal requirements.

Utilizing modern tools such as Azure Data Factory and AWS Database Migration Service helps ensure error-free transfers and protects sensitive information. Data migration consulting is especially important for organizations with sensitive data, complex environments, or a need for minimal downtime, and helps prevent issues such as data loss, security breaches, unexpected costs, and non-compliance with regulations.

Choosing experienced consultants with deep industry knowledge and a successful track record greatly improves the chances that your data migration project succeeds and opens the way to improvements in your organization's IT solutions. Consulting services are suitable for businesses of all sizes and support stability and future growth.

Data-driven marketing companies use data analysis and advanced tools to deliver targeted, measurable campaigns that improve return on investment (ROI). They set SMART goals, segment audiences precisely, personalize messages, optimize continuously, and use modern analytical tools, and they monitor and report the performance of each campaign transparently.

The advantages of this approach include increased conversion rates, reduced customer acquisition costs, enhanced marketing effectiveness, and the ability to make more accurate predictions. Challenges include the need for up-to-date technology, skilled analysts, and compliance with data privacy regulations.
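
The metrics named above reduce to simple ratios. The following is a minimal sketch in Python, assuming a hypothetical `CampaignResult` record and made-up figures. Real reporting would pull these values from an analytics platform and may define ROI differently (for example, on profit rather than revenue).

```python
from dataclasses import dataclass


@dataclass
class CampaignResult:
    """Hypothetical campaign summary; field names are illustrative only."""
    name: str
    spend: float      # total campaign cost
    visitors: int     # people who reached the landing page
    customers: int    # visitors who converted
    revenue: float    # revenue attributed to the campaign


def conversion_rate(c: CampaignResult) -> float:
    """Share of visitors who became customers."""
    return c.customers / c.visitors if c.visitors else 0.0


def acquisition_cost(c: CampaignResult) -> float:
    """Average spend per acquired customer (CAC)."""
    return c.spend / c.customers if c.customers else float("inf")


def roi(c: CampaignResult) -> float:
    """Return on investment as (revenue - spend) / spend."""
    return (c.revenue - c.spend) / c.spend if c.spend else 0.0


if __name__ == "__main__":
    # Made-up numbers, used only to show the arithmetic.
    campaign = CampaignResult("spring_email", spend=1000.0, visitors=2000,
                              customers=50, revenue=4000.0)
    print(f"conversion rate: {conversion_rate(campaign):.1%}")
    print(f"CAC: {acquisition_cost(campaign):.2f}")
    print(f"ROI: {roi(campaign):.0%}")
```

With these illustrative inputs, 50 customers from 2,000 visitors is a 2.5% conversion rate, each customer costs 20.00 to acquire, and the campaign returns 300% on spend.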

When choosing a suitable company, attention should be paid to experience, transparency, the technologies used, and the ability to personalize solutions. The financial, retail, technology, and e-commerce industries benefit the most from this method.

Success in data-driven marketing requires setting clear objectives, deep audience understanding, continuous testing and optimization, and transparency in reporting so that businesses can maximize the return from their marketing budget.

Cloud Data Engineering with AWS enables organizations to process and analyze massive volumes of data quickly, cost-effectively, and securely. With its extensive global infrastructure, advanced data analytics tools, flexible pricing, and more than 200 specialized services, AWS offers a scalable solution for real-time data analytics and large-scale projects.

Key advantages include global scalability, robust security (encryption, access control, and compliance with standards), optimized cost, rapid innovation, and comprehensive support. Services such as Amazon S3, Redshift, Glue, Kinesis, and QuickSight provide solutions for storage, big data processing, ETL, and real-time analytics.

Selecting the right AWS provider should be based on expertise, security, cost management, a comprehensive service portfolio, and a proven track record.

Common applications of these solutions are found in sectors such as retail, healthcare, finance, manufacturing, and education, enabling large-scale data analytics, trend identification, and intelligent decision-making. With AWS cloud services, businesses can rapidly scale in line with their changing needs without the requirement for physical infrastructure.

Outsourcing data management is an effective strategy for reducing costs and improving data quality. By entrusting complex data processes to external specialists, companies can save up to 40% in expenses and focus on their core objectives. This approach converts fixed costs into variable ones, makes it easier to scale, and gives access to the latest technologies. Providers safeguard data quality through advanced tools, validation, and data cleansing, which reduces the risk of errors. Typical services include data collection, entry, cleansing, enrichment, validation, migration, and regular reporting. Industries such as healthcare, finance, retail, and education benefit the most from these services. To manage risks around information security and compliance, it is crucial to choose reputable partners with high security and contractual standards. Important selection criteria include expertise, technology, compliance, transparent reporting, and strong support. Beyond reducing costs and improving quality, outsourcing data management lightens the internal team's workload and supports rapid growth.

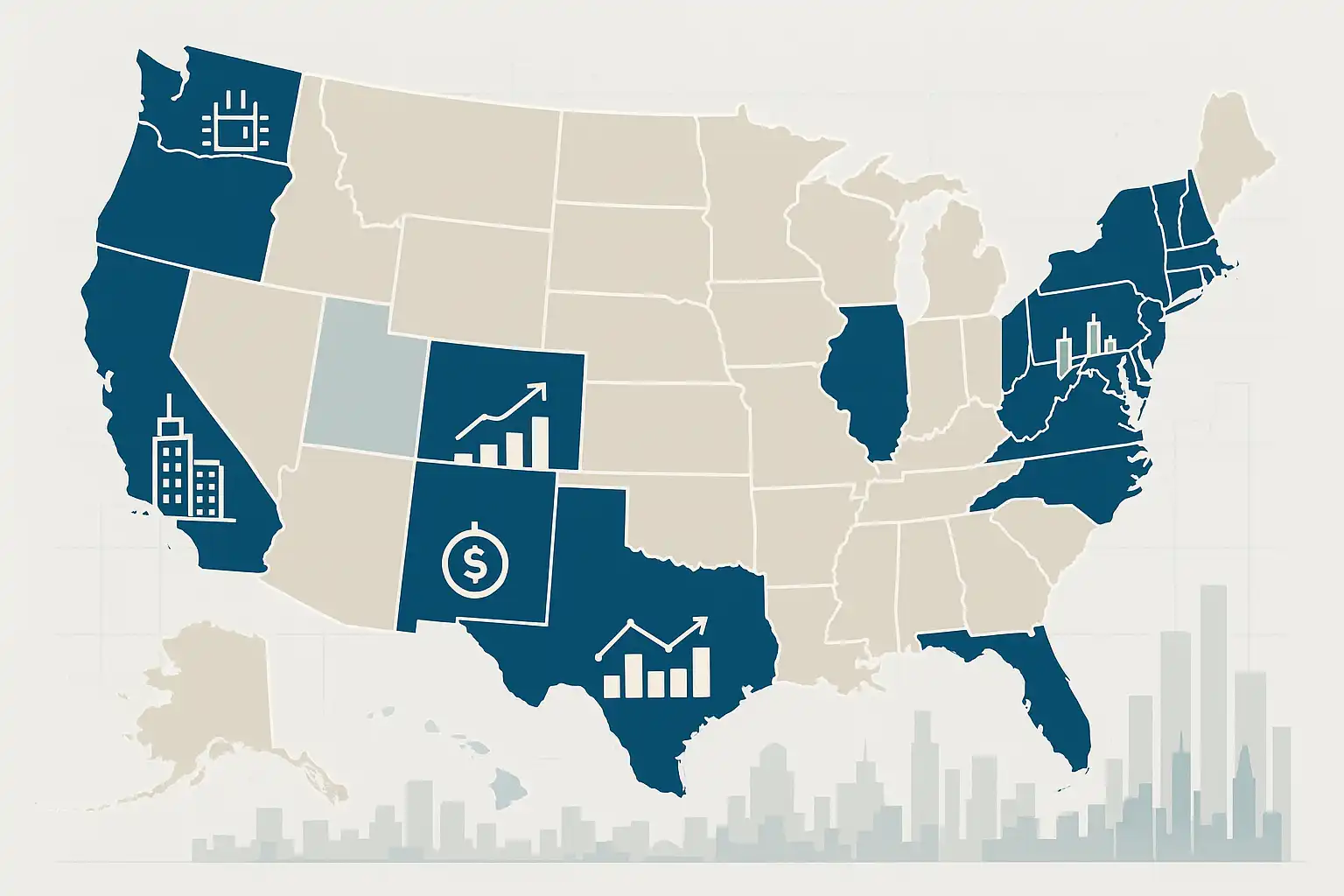

Salaries for Real-Time Data Services specialists vary significantly depending on experience, technical expertise, industry, and geographic location. In the United States, the average annual salary ranges from $110,000 to $135,000, while in the United Kingdom, it falls between £50,000 and £80,000. Cities such as San Francisco, London, and Frankfurt offer the highest pay levels.

Entry-level professionals typically earn between $70,000 and $95,000 per year, while experienced specialists can make up to $180,000 annually. Key factors influencing compensation include proficiency with tools such as Apache Kafka and AWS, years of experience, the specific industry (finance, healthcare, e-commerce), company size, and educational background.

These experts are responsible for designing and managing real-time data processing systems, optimizing data pipelines, and ensuring security and performance. The finance, healthcare, and technology sectors generally offer the highest salaries. Possessing recognized certifications (such as AWS Data Analytics or Google Data Engineer) and specialized technical skills can further boost earning potential.

The job market for real-time data professionals is expanding rapidly, offering strong career prospects and a promising future outlook.

Data mining consultants help organizations uncover hidden patterns and trends through advanced data analysis, enabling smarter decision-making, increased sales, optimized operations, and competitive advantage. These experts use techniques such as predictive analytics, customer segmentation, and pattern recognition to deliver practical and actionable solutions.

Industries including retail, finance, healthcare, manufacturing, and e-commerce benefit from data mining consulting services by improving customer experience, reducing risk, increasing revenue, and cutting costs. The workflow of data mining consultants typically involves assessing data quality, collecting and integrating information, conducting analyses, validating findings, and presenting clear, data-driven recommendations.

These services are valuable not only for large enterprises but also for small businesses, fostering sustainable growth and innovation. Leading data mining consultants—equipped with technical expertise, proficiency in tools like Python and R, and strong communication skills—translate complex data into simple, effective insights that drive business growth.

Customer behavior analysis in ServiceNow is a powerful tool for enhancing customer experience and increasing satisfaction. This process begins with collecting data related to service requests, communication channels, and customer satisfaction levels. The data is then categorized, patterns are identified, and dashboards and analytical tools within ServiceNow are used to uncover weaknesses and improvement opportunities.

Implementing customer behavior analysis helps identify common issues, improve response times, automate processes, and increase the efficiency of the support team. To succeed in this process, it is essential to define clear goals, gather comprehensive data, segment customers, use analytical tools, and take action based on the findings.

Ensuring data quality, maintaining privacy, preparing transparent reports, and performing regular updates are considered best practices. Both large and small organizations can leverage ServiceNow’s advanced capabilities—such as performance analytics, artificial intelligence, and automation—to transform the customer experience and achieve tangible results like higher satisfaction, reduced problem resolution times, and increased self-service growth.

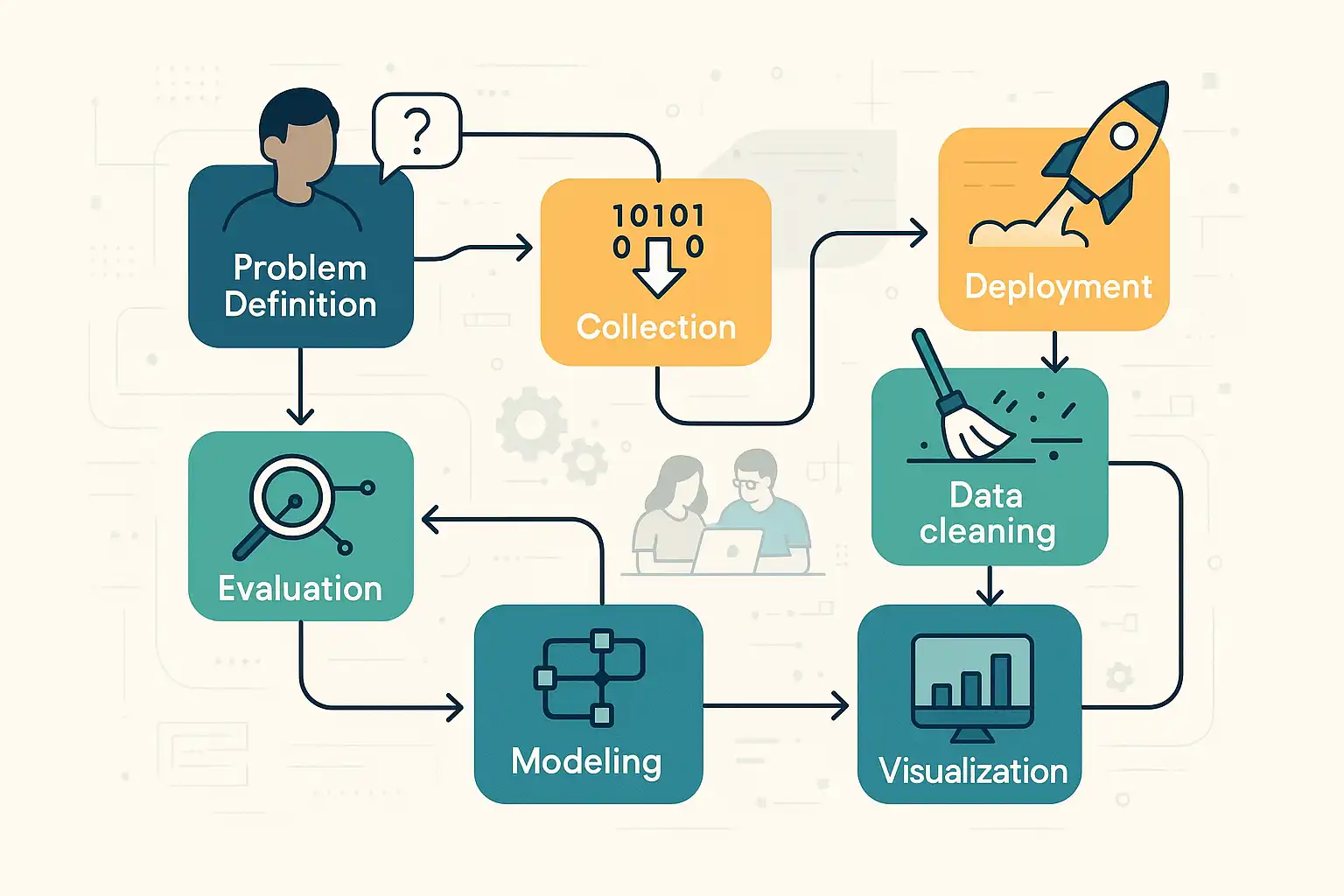

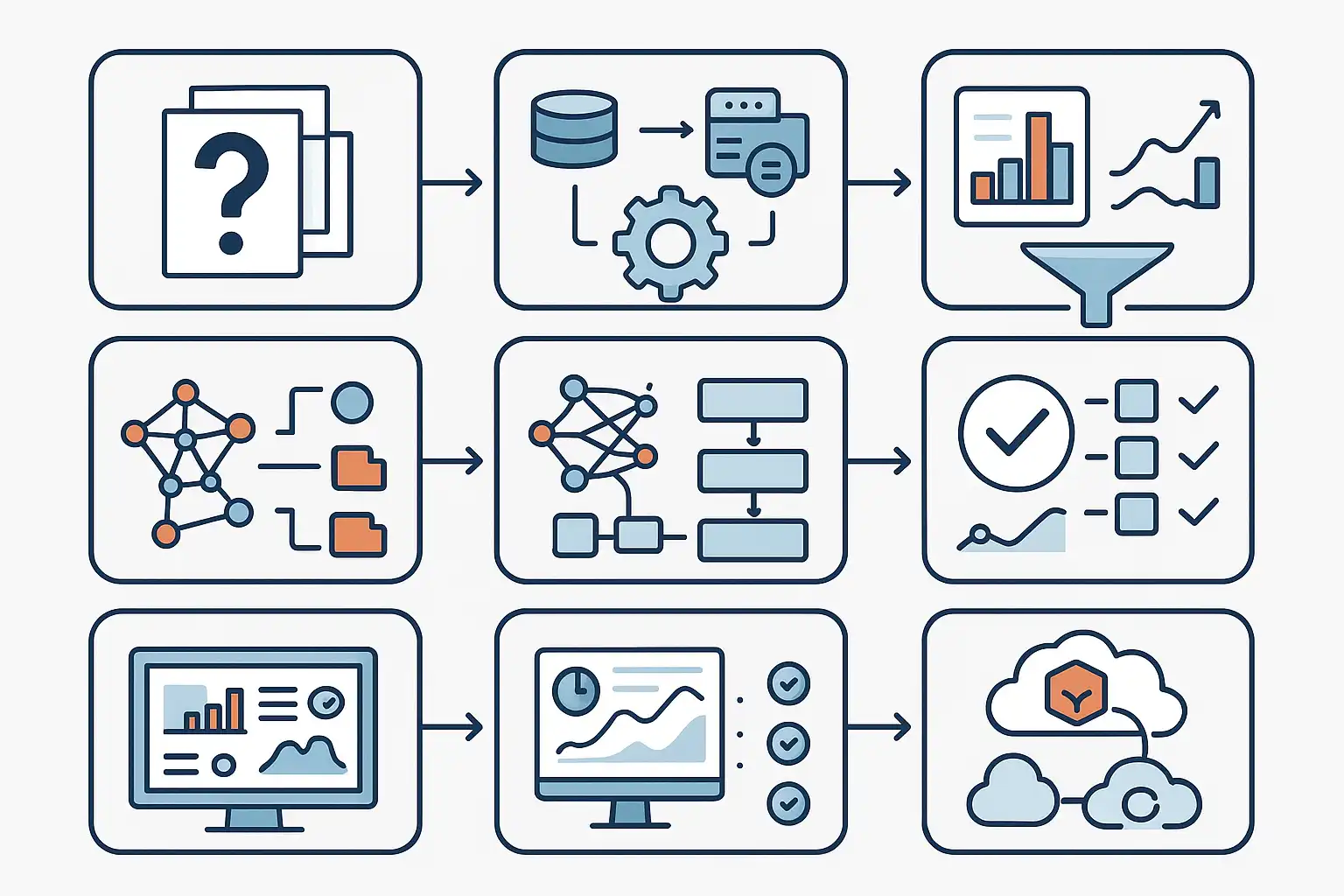

A data science workflow is a structured, step-by-step process guiding data projects from defining a problem to deploying solutions. Essential for organizing teams and ensuring repeatable, reliable results, it typically involves these phases: defining the problem, collecting data, cleaning/preparing data, exploring/analyzing, model building, evaluating results, communicating findings, and deployment/monitoring. Popular frameworks like CRISP-DM, OSEMN, and the Harvard Data Science Workflow provide templates, but many organizations customize workflows for their needs. Key tools for each stage include brainstorming and project management apps, SQL, Python, R, Jupyter Notebooks, machine learning libraries (scikit-learn, TensorFlow), and deployment platforms (MLflow, Kubeflow). Best practices—such as starting with clear objectives, thorough documentation, regular communication, validating assumptions, embracing iteration, and ongoing monitoring—ensure efficient, trustworthy outcomes. Choosing the right workflow depends on team expertise, project complexity, and stakeholder needs. Adapting frameworks with team input, piloting on small projects, and maintaining transparency maximize impact. While data pipelines automate data movement, workflows encompass the entire project life cycle. Following a robust data science workflow supports collaboration, minimizes errors, and helps new data scientists learn best practices.
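
The phases listed above can be sketched as a chain of small functions. This is only an illustration in plain Python: the `(ad_spend, sales)` records are made up, the function names are hypothetical, and a one-variable least-squares line stands in for whatever model a real project would build with a library such as scikit-learn.

```python
from statistics import mean


def collect():
    """Collect data: hypothetical (ad_spend, sales) pairs; None marks a gap."""
    return [(1.0, 3.1), (2.0, 4.9), (3.0, None),
            (4.0, 9.2), (5.0, 10.8), (6.0, 13.1)]


def clean(rows):
    """Clean/prepare: drop records with missing values."""
    return [(x, y) for x, y in rows if x is not None and y is not None]


def fit_line(rows):
    """Model building: ordinary least squares for y = slope * x + intercept."""
    xs = [x for x, _ in rows]
    ys = [y for _, y in rows]
    x_bar, y_bar = mean(xs), mean(ys)
    slope = (sum((x - x_bar) * (y - y_bar) for x, y in rows)
             / sum((x - x_bar) ** 2 for x in xs))
    return slope, y_bar - slope * x_bar


def evaluate(model, rows):
    """Evaluate: mean absolute error on rows the model has not seen."""
    slope, intercept = model
    return mean([abs(y - (slope * x + intercept)) for x, y in rows])


def run_workflow():
    """Run the phases in order and return a summary to communicate."""
    rows = clean(collect())
    train, test = rows[:-2], rows[-2:]   # hold out the last two records
    model = fit_line(train)
    return {"n_clean": len(rows), "model": model,
            "test_mae": evaluate(model, test)}


if __name__ == "__main__":
    print(run_workflow())
```

Keeping each phase in its own function makes the steps easy to document, test, and rerun as the project iterates.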

Automated reporting tools streamline business decision-making by delivering real-time, accurate data and eliminating manual reporting tasks. These platforms integrate data from multiple sources, generate customizable dashboards, and offer advanced visualizations, enabling teams to access consistent, up-to-date insights instantly. Key features include robust data integration, scheduled report generation, mobile accessibility, and built-in analytics for forecasting. Popular solutions like Power BI, Tableau, Google Data Studio, Looker, and Zoho Analytics cater to diverse organizational needs. By automating repetitive tasks, these tools reduce human error, save time, and enhance collaboration—especially in remote or cross-functional teams. While initial setup and training may be required, and integration with legacy systems can pose challenges, the benefits of rapid, data-driven decision-making far outweigh the drawbacks. Automated reports foster transparency and unified action across departments, allowing teams to focus on strategy and problem-solving. For best results, organizations should clearly define reporting goals, regularly update dashboards, invest in user training, and ensure data quality. Automated reporting tools do not replace analysts but empower them to deliver deeper analysis, driving confident, agile business decisions.

A data mining specialist salary varies depending on experience, education, technical skills, industry, and location. Entry-level positions typically offer $55,000 to $75,000 annually, while mid-level roles range from $80,000 to $110,000. Senior specialists can earn $120,000 to $150,000 or more, especially in high-demand sectors like finance and tech. The national average is around $100,000 per year, with higher pay in major tech hubs such as San Francisco and New York. Key factors influencing salary include expertise in programming languages (Python, R, Java), database management (SQL, NoSQL), machine learning, data visualization (Tableau, Power BI), and relevant certifications. Industries such as finance, technology, and healthcare offer the most competitive salaries, while regional differences also play a significant role. Demand for data mining specialists remains strong, driven by the growing need for data-driven decision-making across sectors. Career advancement is enhanced by continual learning, networking, demonstrating business impact, and effective negotiation. While the field offers high earning potential and job security, professionals must adapt to evolving tools, manage complex datasets, and communicate insights clearly. Overall, data mining is a lucrative and expanding career path.

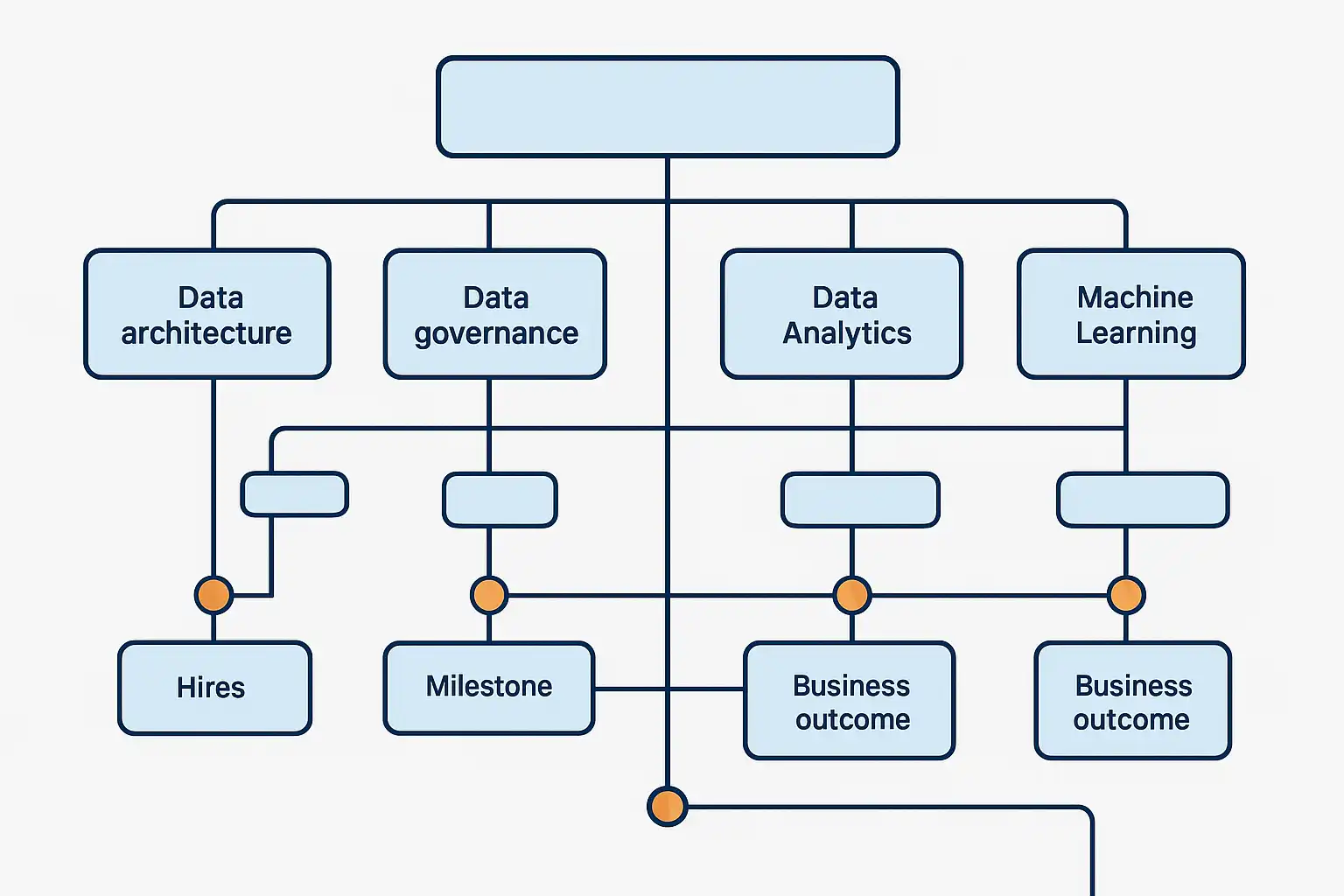

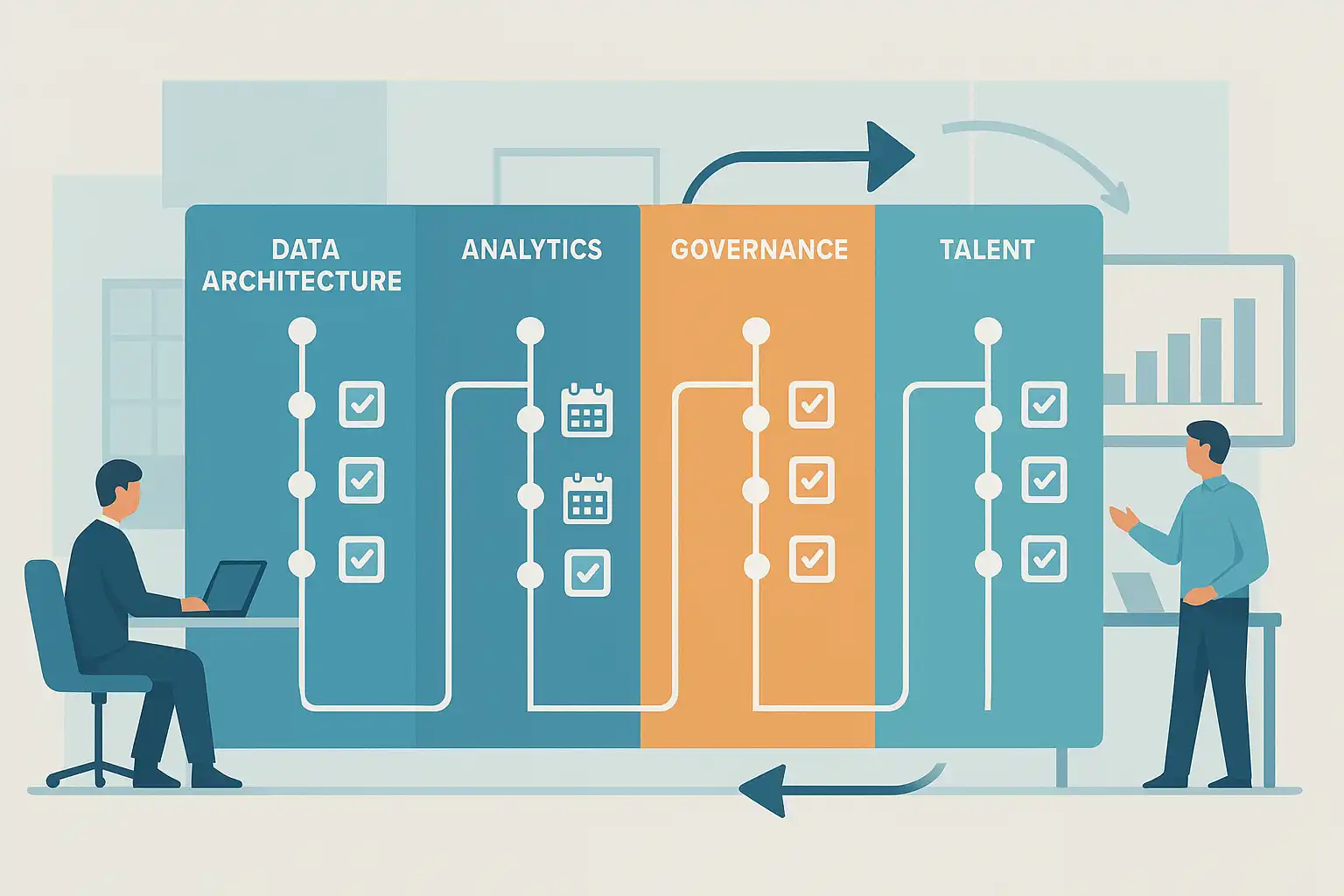

A robust data strategy and roadmap are essential for aligning analytics with business goals, ensuring that data initiatives drive real value rather than just generating reports. An effective data strategy connects business objectives with technology, skilled teams, and strong data governance. Key components include aligning analytics with organizational priorities, adopting a scalable modern data stack, establishing clear data ownership and privacy policies, nurturing a data-literate workforce, and maintaining a flexible, regularly updated roadmap. By linking analytics directly to business outcomes—such as customer retention or operational efficiency—organizations gain actionable insights, accelerate decision-making, and foster collaboration across departments. Building a future-ready analytics team requires clear roles, ongoing training, and strong partnerships with business units. Creating and maintaining a data strategy involves assessing current capabilities, engaging stakeholders, defining clear objectives, designing scalable architecture, prioritizing impactful projects, and continuously reviewing progress. Tools like cloud platforms, governance solutions, and BI tools support this process. Regular updates keep the strategy relevant amid evolving business needs and technology trends. Ultimately, a well-executed data strategy and roadmap empower organizations to turn data into meaningful business growth and smarter decisions.

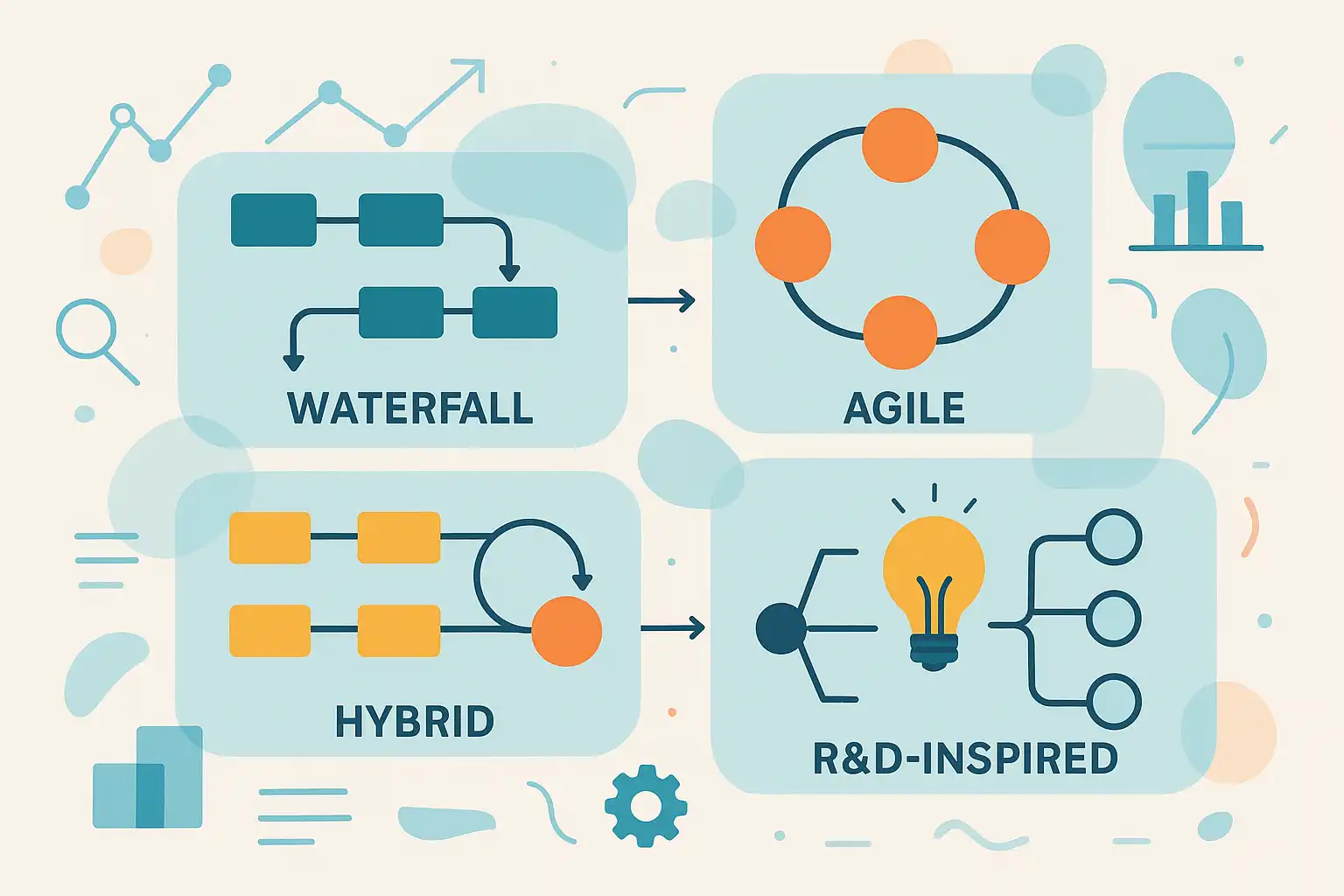

Custom AI software development services deliver tailored artificial intelligence solutions designed to meet the unique needs of businesses across various industries. Unlike off-the-shelf AI products, custom AI software is built to align precisely with your workflows, data, and strategic objectives, ensuring maximum efficiency, accuracy, and value. Key benefits include increased productivity through automation, improved decision-making via AI-driven analytics, cost savings, enhanced customer experiences, and accelerated innovation. Industries such as healthcare, retail, manufacturing, finance, and energy particularly benefit from these customized solutions. The development process involves thorough consultation, requirement analysis, solution design, model development, integration, and ongoing support. Leading technologies like machine learning frameworks, NLP, computer vision, and cloud platforms are used based on project needs. When choosing a provider, consider their industry experience, end-to-end service capabilities, development methodology, commitment to ethical AI, and client references. Challenges such as data quality, privacy, change management, and cost can be overcome with careful planning and the right partner. Ultimately, custom AI solutions empower businesses of all sizes to innovate, streamline operations, and maintain a competitive edge in today’s digital landscape.

AI-powered business services are revolutionizing business operations by automating repetitive tasks, enhancing data analysis, and enabling faster, smarter decision-making. Leveraging advanced technologies like machine learning, deep learning, and neural networks, these services streamline workflows, reduce human error, and free up employees for strategic work. Key features include automated customer service, predictive analytics, personalized marketing, cybersecurity, and efficient inventory management. Popular tools include conversational AI platforms, business intelligence solutions, generative AI for content creation, and predictive analytics engines. Adopting AI leads to tangible benefits such as cost reduction, improved operational efficiency, enhanced customer satisfaction, better risk management, and increased innovation. To get started, businesses should identify high-impact areas, pilot solutions, train staff, and ensure data quality. Best practices include promoting AI literacy, choosing scalable tools, and aligning AI strategies with overall business goals. Address challenges like data privacy and ethical usage by implementing strong protections and continuous staff training. AI-powered business services are accessible to companies of all sizes, offering measurable gains in efficiency, accuracy, and growth while maintaining robust security and compliance standards.

Cloud-based video analytics services leverage AI and machine learning to deliver smarter, real-time monitoring without the need for onsite hardware. By processing video streams securely in the cloud, these solutions offer organizations scalability, cost efficiency, remote access, and automatic updates. Key features include real-time alerts, object and facial recognition, license plate detection, heat mapping, and seamless integration with other security systems. Common use cases span retail analytics, city surveillance, workplace safety, healthcare monitoring, and educational facility security. Leading providers such as Google Cloud, Microsoft Azure, Amazon Rekognition, Cisco Meraki, and Genetec offer robust solutions with strong encryption, access controls, and compliance with privacy regulations like GDPR and HIPAA. While cloud video analytics reduces hardware costs and offers flexible, subscription-based pricing, organizations should consider bandwidth, privacy requirements, long-term costs, and potential vendor lock-in. To maximize benefits, assess your existing infrastructure, choose the right provider, establish privacy policies, and train staff accordingly. Cloud video analytics empowers businesses to enhance security, gain actionable insights, and optimize operations, making it an ideal choice for modern, data-driven organizations.

Customer behavior analysis in the service industry is vital for understanding what drives customer choices and enhancing services across sectors like hospitality, healthcare, and retail. By leveraging both quantitative methods (such as purchase history and web analytics) and qualitative techniques (like interviews and feedback surveys), businesses gain actionable insights to personalize offerings, streamline operations, and boost satisfaction. Key trends shaping this field include real-time feedback, predictive analytics, omnichannel data integration, and a growing focus on data privacy. Best practices involve mapping the customer journey, responding promptly to feedback, personalizing experiences, aligning cross-functional teams, and measuring success through satisfaction and retention metrics. Tools such as CRM platforms, analytics software, and sentiment analysis solutions help organizations of all sizes turn data into meaningful improvements. Overcoming challenges like data quality, privacy, and resource constraints ensures even small businesses can benefit. Regular customer behavior analysis enables companies to anticipate needs, reduce churn, and increase revenue, providing a crucial competitive edge in today’s dynamic service environment.
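
The satisfaction and retention metrics mentioned above can be computed from very little data. The sketch below is illustrative only: the customer IDs and ratings are made up, the function names are hypothetical, and it assumes the common convention that a rating of 4 or 5 on a 5-point scale counts as "satisfied".

```python
def retention_rate(start_customers, end_customers):
    """Share of customers active at the start who are still active at the end."""
    start = set(start_customers)
    if not start:
        return 0.0
    return len(start & set(end_customers)) / len(start)


def churn_rate(start_customers, end_customers):
    """Share of starting customers who left during the period."""
    start = set(start_customers)
    if not start:
        return 0.0
    return len(start - set(end_customers)) / len(start)


def csat(ratings, satisfied_from=4):
    """Share of survey ratings at or above the 'satisfied' threshold (assumed 4 of 5)."""
    if not ratings:
        return 0.0
    return sum(1 for r in ratings if r >= satisfied_from) / len(ratings)


if __name__ == "__main__":
    january = {"c1", "c2", "c3", "c4"}      # made-up customer IDs
    february = {"c1", "c2", "c5"}           # c5 is new, c3 and c4 left
    print(f"retention: {retention_rate(january, february):.0%}")
    print(f"churn:     {churn_rate(january, february):.0%}")
    print(f"CSAT:      {csat([5, 4, 3, 2, 5]):.0%}")
```

New customers such as `c5` do not affect retention, because the metric only looks at who was present at the start of the period.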

An automated reporting system streamlines data collection, analysis, and reporting, enabling businesses to access accurate, timely insights with minimal manual effort. By integrating with various platforms and automating repetitive tasks, these systems minimize human error, ensure data consistency, and deliver real-time information essential for agile decision-making. Key benefits include increased accuracy, speed, resource savings, and the ability to customize reports for specific business needs. Leading tools like Power BI, Tableau, Google Data Studio, and Zoho Analytics offer scalable, user-friendly solutions with robust integration and security features. Automated reporting empowers organizations across finance, marketing, sales, and operations to spot trends, respond swiftly to market changes, and drive growth. Best practices for implementation include setting clear goals, involving key users, ensuring data quality, and providing staff training. While challenges such as complex setup, data quality issues, and user resistance may arise, regular audits and tailored solutions can mitigate risks. Automated reporting systems—whether scheduled, on-demand, or alert-based—are vital for modern businesses seeking to enhance decision-making, boost efficiency, and stay competitive in a data-driven landscape.
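
As a rough illustration of the alert-based reporting mentioned above, the sketch below aggregates raw rows, flags any total that falls under a target, and renders a plain-text report. The region names, amounts, and the `minimum` threshold are made up, and the tools named in this section provide this through their own interfaces rather than code like this.

```python
from collections import defaultdict


def summarize(rows):
    """Aggregate (region, amount) rows into a total per region."""
    totals = defaultdict(float)
    for region, amount in rows:
        totals[region] += amount
    return dict(totals)


def find_alerts(totals, minimum):
    """Return the regions whose total is below the target, sorted by name."""
    return sorted(region for region, total in totals.items() if total < minimum)


def render_report(totals, alerts):
    """Build a small fixed-width text report, marking regions that need attention."""
    lines = ["Sales by region"]
    for region in sorted(totals):
        flag = "  <-- below target" if region in alerts else ""
        lines.append(f"{region:<8}{totals[region]:>10.2f}{flag}")
    return "\n".join(lines)


if __name__ == "__main__":
    # Hypothetical input; a real system would read this from its data sources.
    rows = [("north", 120.0), ("south", 40.0), ("north", 30.0)]
    totals = summarize(rows)
    print(render_report(totals, find_alerts(totals, minimum=100.0)))
```

Running the same functions on a timer gives a scheduled report, and calling them from a request handler gives an on-demand one; only the trigger changes.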

Industry-specific data science challenges are critical hurdles for organizations aiming to leverage data for strategic advantage. Key obstacles include integrating data from diverse sources, a shortage of skilled data scientists, ensuring data privacy and regulatory compliance, cleaning and preparing messy datasets, and effectively communicating insights to non-technical stakeholders. Sectors such as healthcare, finance, retail, and manufacturing face unique issues—like patient privacy, fraud detection, omni-channel analysis, and IoT integration—requiring tailored solutions. To overcome these challenges, businesses should adopt robust data integration tools, invest in ongoing staff training, implement strong data governance and security measures, automate data cleansing, and prioritize clear, visual communication of results. Customizing approaches for each industry, fostering collaboration between technical and business teams, and staying current with regulations are essential for successful data-driven strategies. Both large enterprises and small businesses can benefit from applying these best practices, enabling more efficient operations and better decision-making.

Start your data science career with a free online bootcamp—no experience or financial investment required. Free online data science bootcamps offer structured, intensive training on foundational topics such as Python, Excel, SQL, statistics, data analysis, and real-world project work. Leading platforms like Correlation One’s Data Science For All (DS4A), Fullstack Academy’s Data Analyst Accelerator, and Hack the Hood provide immersive learning, mentorship, and career support, often focusing on underrepresented groups or specific regions. Most bootcamps are remote, flexible, and designed for beginners, students, and career changers. To get started, define your goals, research eligibility, prepare basic skills, apply thoughtfully, and engage with mentors and peers. Build a strong portfolio through hands-on projects and leverage career services to enhance job prospects. While free bootcamps may have competitive entry and cover mainly fundamentals, they deliver job-ready skills for entry-level roles in analytics and data science. With commitment and continued self-study, graduates can secure interviews, internships, or junior positions. Certificates may be offered, but practical projects and networking matter most to employers. Start learning data science online for free and unlock new career opportunities today.

Time series forecasting is a specialized data analysis technique that predicts future values based on historical, time-ordered data. Unlike traditional prediction models that assume data points are independent, time series forecasting accounts for temporal patterns such as trends, seasonality, and cycles, which makes it essential for applications like financial market analysis, retail sales predictions, energy demand planning, weather forecasting, healthcare resource management, and supply chain optimization. Key forecasting models include ARIMA, Exponential Smoothing, Facebook Prophet, and LSTM neural networks, each suited to different data characteristics. The forecasting process involves data exploration, cleaning, feature engineering (like adding lagged variables), model selection, validation on time-ordered holdout data using error metrics such as RMSE and MAPE, and ongoing model refinement. Popular tools include Microsoft Excel, Python libraries (pandas, statsmodels, Prophet), R packages, and cloud platforms like Amazon Forecast and Google Cloud AI. Benefits of time series forecasting include improved planning, resource optimization, and proactive trend identification. Challenges involve ensuring data quality, model selection, and adapting to unexpected events. Best practices emphasize thorough visualization, careful handling of missing data, maintaining time order during validation, and regularly updating models. Effective time series forecasting enables businesses to gain actionable insights, drive automated reporting, and make data-driven decisions.
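
A few of the steps above (lagged features, a split that preserves time order, and the RMSE and MAPE metrics) can be sketched in plain Python. This is a simplified illustration, not a production forecaster: the demand figures are made up, the smoothing factor `alpha=0.5` is an arbitrary assumption, and simple exponential smoothing stands in for the richer models a library such as statsmodels provides.

```python
import math


def make_lagged(series, n_lags):
    """Feature engineering: pair each value with the n_lags values before it."""
    return [(series[i - n_lags:i], series[i]) for i in range(n_lags, len(series))]


def ses_level(series, alpha):
    """Simple exponential smoothing: return the final smoothed level."""
    level = series[0]
    for value in series[1:]:
        level = alpha * value + (1 - alpha) * level
    return level


def rmse(actual, predicted):
    """Root mean squared error."""
    return math.sqrt(sum((a - p) ** 2 for a, p in zip(actual, predicted)) / len(actual))


def mape(actual, predicted):
    """Mean absolute percentage error; undefined when an actual value is zero."""
    return 100 * sum(abs(a - p) / abs(a) for a, p in zip(actual, predicted)) / len(actual)


if __name__ == "__main__":
    demand = [112, 118, 132, 129, 121, 135, 148, 148, 136, 119]  # made-up values
    split = 7
    train, test = demand[:split], demand[split:]   # never shuffle: keep time order

    smoothed = [ses_level(train, alpha=0.5)] * len(test)   # flat forecast
    naive = [train[-1]] * len(test)                        # baseline: repeat last value

    for name, forecast in (("smoothed", smoothed), ("naive", naive)):
        print(f"{name}: RMSE={rmse(test, forecast):.2f} MAPE={mape(test, forecast):.2f}%")
```

Comparing against the naive baseline is a cheap sanity check: a model that cannot beat "repeat the last value" on held-out data is not yet adding anything.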

Data conversion services are essential for organizations aiming to migrate, upgrade, or consolidate their data across new systems, platforms, or software. These services go beyond simple data transfer—they clean, deduplicate, standardize, and transform data for compatibility, accuracy, and immediate usability. Key industries like healthcare, finance, retail, technology, and manufacturing benefit most from professional data conversion, as it ensures regulatory compliance, protects sensitive information, and supports seamless operations. The typical process involves data assessment, preparation, mapping, transformation, verification, and finalization, ensuring high data quality and reduced business risk. Techniques vary for structured and unstructured data, utilizing tools like ETL software, OCR, and NLP for optimal results. Data cleaning is a critical step, boosting accuracy, efficiency, and compliance while minimizing post-migration issues. Professional services offer advantages such as expert oversight and reduced downtime but may involve additional costs and require collaboration. Best practices include thorough planning, early data validation, clear documentation, and stakeholder communication. Data conversion is vital for successful data migration, especially during legacy system upgrades, and ensures data security through encryption and strict protocols, supporting modern business needs with reliable, organized data.
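
The mapping, transformation, deduplication, and verification steps described above might look like the following in miniature. Everything here is an assumption made for illustration: the legacy column names in `FIELD_MAP`, the accepted date formats, the choice of email as the deduplication key, and the sample records. The name cleanup with `str.title()` is deliberately simplistic.

```python
from datetime import datetime

# Hypothetical mapping from legacy column names to the target schema.
FIELD_MAP = {"Full Name": "name", "E-mail": "email", "Joined": "joined_on"}
# Date formats assumed to occur in the legacy data.
DATE_FORMATS = ("%Y-%m-%d", "%d/%m/%Y")


def parse_date(text):
    """Standardize a date string to ISO 8601, or return None if unparseable."""
    for fmt in DATE_FORMATS:
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    return None


def transform(record):
    """Map legacy fields to the target schema and normalize their values."""
    row = {new: (record.get(old) or "").strip() for old, new in FIELD_MAP.items()}
    row["name"] = " ".join(row["name"].split()).title()
    row["email"] = row["email"].lower()
    row["joined_on"] = parse_date(row["joined_on"])
    return row


def convert(records):
    """Return (converted, duplicates, rejected); every input lands in exactly one."""
    converted, duplicates, rejected = [], [], []
    seen_emails = set()
    for record in records:
        row = transform(record)
        if not row["email"] or row["joined_on"] is None:
            rejected.append(record)          # fails validation: keep for review
        elif row["email"] in seen_emails:
            duplicates.append(record)        # same key already converted
        else:
            seen_emails.add(row["email"])
            converted.append(row)
    return converted, duplicates, rejected


if __name__ == "__main__":
    legacy = [
        {"Full Name": "  ada   lovelace ", "E-mail": "ADA@Example.com", "Joined": "10/12/2021"},
        {"Full Name": "Ada Lovelace", "E-mail": "ada@example.com ", "Joined": "2021-12-10"},
        {"Full Name": "Alan Turing", "E-mail": "", "Joined": "2020-01-05"},
    ]
    converted, duplicates, rejected = convert(legacy)
    # Verification: the three buckets must account for every source record.
    assert len(legacy) == len(converted) + len(duplicates) + len(rejected)
    print(converted, len(duplicates), len(rejected))
```

Routing rejected and duplicate records into their own lists, rather than silently dropping them, is what makes the final count reconciliation possible.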

Healthcare data solutions in fabric are revolutionizing patient care by integrating and harmonizing data from multiple sources such as EHRs, imaging, labs, and social determinants of health. By breaking down data silos, these platforms enable unified, real-time access to patient information, supporting faster, safer, and more personalized medical decisions. Key technologies like FHIR, OMOP, and DICOM ensure data interoperability, while AI and advanced analytics deliver actionable insights, automate diagnostics, and streamline care delivery. Fabric-based solutions enhance operational efficiency, lower costs, and support compliance with privacy laws like HIPAA and GDPR. Leading platforms, including Microsoft Fabric and Azure Health Data Services, empower healthcare organizations to move past legacy systems and manual processes, enabling proactive risk identification, tailored treatments, and advanced research capabilities. Ultimately, healthcare data fabric fosters a modern, secure, and flexible environment, driving innovation and improving patient outcomes across hospitals, clinics, and research centers.

The data science team augmentation process enables organizations to accelerate project delivery and improve flexibility by temporarily adding external data experts to their in-house teams. This approach allows businesses to quickly bridge skill gaps, scale resources for urgent or specialized tasks, and access advanced expertise in machine learning, analytics, and engineering without long-term hiring commitments. Key benefits include rapid onboarding, reduced recruitment cycles, improved team morale, and exposure to the latest tools and best practices. Successful team augmentation relies on clear role definitions, strong communication, robust project management tools (like Jira, Trello, Slack), and thorough onboarding. Leading brands such as IBM and Deloitte utilize this model to drive digital transformation. While it offers quick, scalable talent and cost-effectiveness for short-term projects, challenges include integration, data confidentiality, and potential limits to organizational learning. Measuring success involves tracking project delivery times, quality, cost savings, and knowledge transfer. Suitable for organizations of all sizes, data science team augmentation ensures agility, high-quality outcomes, and future readiness by blending internal strengths with external expertise.

Cloud-based analytics solutions empower organizations with agile, real-time insights by centralizing data access, analysis, and sharing in the cloud. These platforms enhance decision-making speed, scalability, and cost efficiency, eliminating the need for heavy on-premises infrastructure. Key benefits include instant data integration, advanced security, seamless collaboration, and powerful data visualization. Businesses can process massive data sets, spot trends, and respond immediately to opportunities or challenges, staying competitive in fast-changing markets. Features to seek in a cloud analytics platform include real-time dashboards, predictive analytics, robust security, multi-source integration, and user-friendly collaboration tools. Popular solutions like Microsoft Power BI, TIBCO, and Google Cloud offer flexible options for different needs. Cloud analytics supports a variety of industries—retail, finance, healthcare, manufacturing, and marketing—by enabling use cases such as sales optimization, fraud detection, patient monitoring, supply chain management, and campaign analysis. Implementation is straightforward with careful planning, phased rollout, and staff training. The cloud approach also improves disaster recovery and supports remote work, though businesses should consider connectivity, vendor lock-in, and compliance. Overall, cloud-based analytics solutions drive smarter, faster, and more secure business decisions for agile organizations.

A robust data governance strategy and roadmap are vital for organizations managing personal, financial, or operational data to ensure regulatory compliance and build stakeholder trust. Key components include a clear operating model aligned with business goals, defined roles using frameworks like RACI/DACI, adequate skills and funding, structured process phases, modular and scalable technology, centralized metadata management, and automation powered by AI. Engaging stakeholders and enhancing data literacy across all levels further strengthens the governance framework. To comply with regulations like GDPR, HIPAA, or CCPA, organizations should prioritize risk-based projects, assign clear ownership, leverage modern governance platforms, centralize policies, and regularly measure and update compliance efforts. Building trust involves transparent, reliable data practices, executive sponsorship, consistent communication, and cross-functional collaboration. Implementing a successful data governance roadmap requires assessing current processes, defining goals, building a dedicated team, mapping workflows, selecting appropriate technology, ongoing training, and continuous improvement. Leading tools such as Collibra, Informatica, Alation, Microsoft Purview, and cloud platforms like AWS and Azure support these efforts with features like metadata management and policy automation. Even small organizations benefit from data governance, which enables secure, compliant, and data-driven decision-making while reducing risks and fostering business growth.

Salaries for data mining experts are highly competitive and vary widely with experience, industry, job role, skills, and location. Entry-level salaries typically start at $70,000, rising to $150,000 or more for seasoned professionals, especially those with advanced skills or leadership roles. Key responsibilities include analyzing large datasets, applying statistical models, and providing insights that drive business decisions across sectors like technology, finance, healthcare, and retail. Tech and finance offer the highest compensation, often $100,000 to $180,000+, while academia and government roles pay less but may offer other benefits. Geographic location significantly impacts pay, with major tech hubs in North America, Europe, and Australia offering the highest salaries. Desired skills include Python, R, machine learning frameworks, SQL, cloud platforms, and data visualization tools; continuous learning and specialization in areas like real-time analytics or deep learning further boost earning potential. Advanced degrees can lead to higher salaries, but relevant experience and skills are crucial. Data mining expertise is transferable across industries, and staying competitive requires ongoing education and professional networking. Overall, data mining experts enjoy strong demand, excellent earning prospects, and diverse career opportunities worldwide.

Enroll in a top-rated data science training course in Noida to gain practical, industry-relevant skills and boost your career in analytics and technology. These courses, ideal for students, professionals, and career switchers, offer an industry-aligned curriculum covering Python, Java, statistics, machine learning, data visualization, databases, AI, cloud deployment, and web basics. You’ll work on real-world projects, build a strong portfolio, and receive official certification, enhancing your job prospects in IT, finance, healthcare, and more. Flexible learning formats, job placement support, mentoring, and hands-on experience set Noida’s programs apart. Courses typically last 3 to 6 months and include live projects, career counseling, and resume building. No advanced coding skills are needed to start; courses guide you step by step. After completion, you can apply for roles like data analyst, junior data scientist, or ML engineer, and benefit from robust employer networks. Start your data-driven career today by researching local institutes, comparing curricula, and enrolling in a course that fits your schedule and goals. Noida’s thriving tech scene and expert-led training make it a smart choice for aspiring data professionals.

Time series forecasting is a powerful technique that leverages historical, time-stamped data to predict future trends, making it invaluable for businesses seeking data-driven planning and improved decision-making. It is particularly effective when there is consistent historical data, clear patterns or seasonality, and a need for ongoing, repeatable forecasts, such as predicting sales, resource needs, or customer demand. Organizations of all sizes, from retailers and manufacturers to energy providers and small businesses, can benefit by optimizing inventory, budgeting confidently, and adapting to changing market conditions. Modern forecasting tools, including statistical models like ARIMA, libraries like Prophet, and machine learning methods, enhance accuracy and automate insights, enabling rapid response and continuous improvement. However, success depends on data quality, sufficient historical records, and choosing models that balance complexity with usability. While time series forecasting cannot predict unforeseen events, it significantly reduces risk by supporting better resource allocation and operational planning. To implement, identify key metrics, collect and clean data, analyze patterns, select and train a suitable model, and integrate forecasts into regular planning cycles. Ultimately, time series forecasting empowers organizations to anticipate trends, minimize losses, and align strategies with business goals.
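
As a rough illustration of the implementation steps described above (prepare the data, pick a model, check it against held-out history), here is a minimal sketch using a seasonal-naive baseline, which simply repeats the value from one season earlier. It is a stand-in for illustration only, not ARIMA or Prophet; the sales figures, the season length of four, and the function names are all assumptions made up for this example.

```python
# Hypothetical quarterly sales figures, invented purely for illustration.
sales = [120, 135, 160, 110, 126, 142, 168, 115, 131, 149, 175, 121]
SEASON = 4  # assumed season length: four quarters per year


def seasonal_naive(history, season, horizon):
    """Forecast each future step by repeating the value one season earlier."""
    forecast = []
    for step in range(horizon):
        # Look back into the last full season of the history.
        forecast.append(history[len(history) - season + (step % season)])
    return forecast


def mean_absolute_error(actual, predicted):
    """Average absolute gap between actual and predicted values."""
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


# Hold out the last season so the baseline is scored on data it has not seen.
train, test = sales[:-SEASON], sales[-SEASON:]
forecast = seasonal_naive(train, SEASON, horizon=SEASON)
mae = mean_absolute_error(test, forecast)

print("forecast:", forecast)
print("MAE on held-out season:", mae)
```

A baseline like this is mainly useful as a yardstick: a more complex model is only worth its added complexity if it beats the baseline's error on the same held-out period.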

Choosing the right machine solution provider for automation and AI is crucial for businesses seeking increased efficiency, cost reduction, and competitive advantage. A machine solution provider delivers customized AI and automation solutions, such as custom software development, robotic process automation (RPA), data analytics, system integration, and ongoing support. Leading providers tailor their offerings to specific industry needs—whether in retail, healthcare, finance, or manufacturing—ensuring scalable and secure solutions that integrate seamlessly with existing infrastructure. Key factors to consider when selecting a provider include proven industry experience, ability to customize solutions, skilled expert teams, integration capabilities, transparent pricing, and robust security and compliance standards. Top providers utilize advanced technologies like generative AI, computer vision, NLP, cloud integration, and predictive analytics to enable end-to-end digital transformation. A strong, long-term partnership with a machine solution provider ensures continuous innovation, regular updates, training, and alignment with evolving business objectives. This approach helps businesses of all sizes—from startups to enterprises—maximize ROI and stay ahead in the rapidly changing landscape of automation and AI.

Data processing services empower businesses of all sizes to transform raw data into actionable insights, automate repetitive tasks, and make data-driven decisions. Leading companies like Amazon, Netflix, Uber Eats, McDonald’s, Starbucks, and Accuweather leverage these services for dynamic pricing, personalized recommendations, optimized deliveries, targeted marketing, and precise forecasting. A typical data processing workflow includes data collection, cleaning, transformation, storage, analysis, and visualization, allowing organizations to streamline operations and respond swiftly to market changes. Key features to consider when selecting data processing solutions include scalability, real-time processing, support for diverse data types, robust data quality assurance, actionable insights, and seamless integration with existing systems. Tools such as Apache Spark, Hadoop, Google BigQuery, Azure Data Factory, Tableau, and Power BI are popular choices for processing and visualizing data. Data processing services not only automate routine tasks and minimize errors but also uncover new business opportunities and support regulatory compliance. Even small businesses can benefit through affordable cloud-based platforms and user-friendly interfaces, making advanced analytics accessible without extensive technical expertise.
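
The workflow stages mentioned above (collection, cleaning, transformation, analysis) can be sketched as a chain of small functions. This is a toy example under stated assumptions: the order records, field names, and cleaning rules are hypothetical, and a production pipeline would more likely run on one of the tools named above.

```python
from collections import defaultdict

# Hypothetical raw order records; field names and values are invented.
raw_orders = [
    {"order_id": "1", "region": " north ", "amount": "20.50"},
    {"order_id": "2", "region": "South", "amount": "15.00"},
    {"order_id": "3", "region": "north", "amount": "not available"},
    {"order_id": "4", "region": "SOUTH", "amount": "4.50"},
]


def clean(records):
    """Drop records whose amount cannot be parsed as a number."""
    cleaned = []
    for record in records:
        try:
            amount = float(record["amount"])
        except ValueError:
            continue  # skip corrupted rows rather than guessing a value
        cleaned.append({**record, "amount": amount})
    return cleaned


def transform(records):
    """Normalize region names so equivalent spellings group together."""
    return [{**r, "region": r["region"].strip().lower()} for r in records]


def analyze(records):
    """Total revenue per region."""
    totals = defaultdict(float)
    for r in records:
        totals[r["region"]] += r["amount"]
    return dict(totals)


revenue_by_region = analyze(transform(clean(raw_orders)))
print(revenue_by_region)
```

Keeping each stage as its own function makes it easy to test stages in isolation and to log how many records each stage drops.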

Automated reporting solutions revolutionize business data management by streamlining data collection, analysis, and distribution. By directly integrating with key data sources such as Google Analytics, CRMs, and social media through secure APIs, these tools drastically reduce manual workload, minimize human error by up to 90%, and cut report generation time by up to 50%. Key features include API-based integrations, customizable dashboards, advanced data validation, automated scheduling, collaboration tools, and robust security controls. Companies adopting automated reporting often realize significant cost savings—up to 30%—and improved ROI, especially in marketing and IT operations. Popular solutions range from business intelligence platforms (like Power BI and Tableau) to marketing analytics, financial reporting tools, and custom in-house systems. While setup and integration require initial investment and ongoing updates, the benefits include accelerated insights, centralized information, enhanced collaboration, and scalable workflows. To implement automated reporting, businesses should identify vital data sources, define KPIs, select appropriate platforms, and pilot solutions within one department before scaling. Automated reporting supports, rather than replaces, data analysts, enabling better strategic decisions. Secure and suitable for businesses of all sizes, these solutions are essential for organizations seeking efficiency, accuracy, and real-time data-driven decision-making.

Data compliance and security services are crucial for safeguarding your business in today’s digital landscape. These solutions integrate advanced technologies—such as data encryption, multi-factor authentication, automated audits, and vulnerability assessments—with regulatory practices to protect sensitive data and ensure adherence to laws like GDPR, HIPAA, and PDPA. Effective services go beyond technology, emphasizing ethical data handling, staff training, secure data disposal, and ongoing compliance reviews. They help prevent data breaches, avoid costly fines, and build trust with customers by demonstrating your commitment to privacy. When selecting a provider, prioritize comprehensive coverage, real-time monitoring, customizable controls, regulatory support, and easy integration with existing systems. Seamless implementation involves risk assessments, clear policies, robust controls, regular training, and incident response planning. Proactively adopting these services reduces risks, enhances operational efficiency, and strengthens your competitive edge—benefiting businesses of all sizes and industries.

Leading natural language processing (NLP) services for conversational AI—such as Google Dialogflow, IBM Watsonx Assistant, Amazon Lex, Rasa, Kore.ai, Zendesk, Moveworks, ServiceNow, Aisera, Haptik.ai, Leena.ai, Avaamo.ai, Verloop.io, Rezolve.ai, and Conversational Cloud—empower organizations to build intelligent chatbots and virtual assistants. These platforms leverage advanced NLP, machine learning, and sometimes generative AI to understand user intent, manage context, automate workflows, and deliver natural, context-aware conversations across multiple channels (web, mobile, voice, messaging apps). Key features to consider when choosing an English-language conversational AI platform include robust NLP, integration with business tools (e.g., Microsoft Teams, Salesforce), workflow automation, security, analytics, scalability, and industry-specific solutions. Businesses in IT, HR, healthcare, education, and banking use these services to automate support, reduce costs, enable 24/7 self-service, and improve customer and employee satisfaction. Modern platforms offer low-code/no-code interfaces for easy deployment, support both text and voice interactions, and continually improve through AI-driven learning. Adopting conversational AI streamlines operations, enhances user experiences, and provides actionable insights for ongoing improvement.

Data quality assessment forms are essential tools for ensuring your organization's data is accurate, reliable, and fit for decision-making. These structured forms or templates help you evaluate key dimensions such as accuracy, completeness, consistency, validity, timeliness, uniqueness, and data integrity. Effective forms include clearly defined quality checks, issue management fields, quality metrics tracking, automation capabilities, and compliance metadata. To choose the right form, align it with your critical datasets and business objectives, selecting relevant dimensions and integrating it into your workflow. Best practices include aligning with your data governance strategy, using standard or customized dimensions, embedding automation, supporting issue tracking, utilizing metadata, and providing team training. Automated tools like Great Expectations, Deequ, and lakeFS can enhance assessments by streamlining checks and maintaining version control, leading to more trustworthy analytics and better business decisions. Ready-to-use templates range from basic spreadsheets to advanced enterprise platforms, allowing organizations to start simple and scale as needed. Begin by selecting a key dataset, applying a basic template, and refining your process over time, ensuring data quality becomes a routine part of your business operations.
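
To make a few of the dimensions above concrete, the following sketch computes completeness, uniqueness, and validity scores for a tiny dataset. The records, field names, and the deliberately simple email pattern are assumptions for illustration; this does not use Great Expectations or Deequ, which offer far richer checks.

```python
import re

# Hypothetical customer records; the fields and values are invented.
records = [
    {"id": 1, "email": "ana@example.com", "country": "IN"},
    {"id": 2, "email": "", "country": "IN"},
    {"id": 3, "email": "not-an-email", "country": "US"},
    {"id": 3, "email": "raj@example.com", "country": None},
]

# Deliberately simple pattern; real email validation is more involved.
EMAIL_PATTERN = re.compile(r"^[^@\s]+@[^@\s]+\.[^@\s]+$")


def completeness(rows, field):
    """Share of rows where the field is present and non-empty."""
    filled = sum(1 for row in rows if row.get(field) not in (None, ""))
    return filled / len(rows)


def uniqueness(rows, field):
    """Share of distinct values among all values of the field."""
    values = [row[field] for row in rows]
    return len(set(values)) / len(values)


def validity(rows, field, pattern):
    """Share of non-empty values that match the expected pattern."""
    values = [row[field] for row in rows if row.get(field)]
    return sum(1 for v in values if pattern.match(v)) / len(values)


report = {
    "email_completeness": completeness(records, "email"),
    "country_completeness": completeness(records, "country"),
    "id_uniqueness": uniqueness(records, "id"),
    "email_validity": validity(records, "email", EMAIL_PATTERN),
}
print(report)
```

Each score is a ratio between 0 and 1, so the results can be recorded directly in the quality metrics fields of an assessment form and tracked over time.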

Create your own machine learning model in minutes with this step-by-step guide, suitable for beginners and professionals alike. Start by collecting relevant data, then preprocess it to handle missing or inconsistent values. Choose the right model type (regression for numeric values or classification for categories) and split your data into training and testing sets. Train your model using popular tools like Python’s scikit-learn, and evaluate performance using metrics such as accuracy, precision, or mean squared error. Tune your model, using techniques like cross-validation to compare candidate settings, then deploy it using platforms like Docker and Kubernetes for scalable, real-world use. Leverage user-friendly tools such as Python and Jupyter Notebooks, and simplify development with AutoML platforms like Google Cloud AutoML and Microsoft Azure Machine Learning. Beginners should focus on learning key concepts, such as features, labels, and data splits, and start with simple models. Avoid common mistakes like ignoring data quality or overfitting, and always test on unseen data. Deployment is streamlined with containerization and cloud services, making your model accessible via APIs. With these best practices and resources, anyone can efficiently build, evaluate, and deploy machine learning models, accelerating innovation for both individuals and organizations.
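
The core loop described above (split the data, train, evaluate on unseen data) can be sketched in a few lines. To keep the example dependency-free, it uses only the Python standard library and a hand-written one-feature least-squares fit rather than scikit-learn; the synthetic data, the 75/25 split, and the function names are assumptions chosen for illustration.

```python
import random

# Synthetic data invented for illustration: y is roughly 3*x + 2 plus noise.
random.seed(0)
xs = [float(i) for i in range(40)]
ys = [3.0 * x + 2.0 + random.uniform(-1.0, 1.0) for x in xs]

# Shuffle, then hold out 25% of the rows as unseen test data.
rows = list(zip(xs, ys))
random.shuffle(rows)
split = int(len(rows) * 0.75)
train, test = rows[:split], rows[split:]


def fit_line(points):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in points)
    variance = sum((x - mean_x) ** 2 for x, _ in points)
    slope = covariance / variance
    return slope, mean_y - slope * mean_x


def mean_squared_error(points, slope, intercept):
    """Average squared prediction error over the given points."""
    return sum((y - (slope * x + intercept)) ** 2 for x, y in points) / len(points)


# Train on the training rows only, then score on the held-out rows.
slope, intercept = fit_line(train)
test_mse = mean_squared_error(test, slope, intercept)
print(f"slope={slope:.2f} intercept={intercept:.2f} test MSE={test_mse:.3f}")
```

The important design point is that the test rows never influence the fit, so the reported error is an honest estimate of how the model behaves on data it has not seen.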

Choosing a reliable business intelligence (BI) service provider is crucial for transforming data into actionable business insights. Trusted BI providers offer secure, scalable, and user-friendly solutions tailored to your business objectives. Key qualities include transparent alignment with business goals, advanced analytics, robust data integration, strict security measures, intuitive interfaces, responsive support, and clear pricing. Top BI providers—such as Microsoft Power BI, Tableau, Qlik Sense, Google Looker, Sisense, and Zoho Analytics—stand out for their strong security, integration capabilities, and scalability, catering to both small businesses and large enterprises. For small businesses, affordable, no-code platforms like Zoho Analytics and Sisense enable quick setup, mobile access, and seamless integration with popular business tools. Reliable BI providers deliver benefits such as improved decision-making, increased efficiency, enhanced data security, scalability, cost savings, and compliance support. When selecting a provider, define your objectives, assess technical and support needs, ensure scalability, and compare real-world reviews. Look for features like real-time dashboards, customizable metrics, drag-and-drop visualization, and strong data governance. Ultimately, the right BI partner empowers your organization with secure, actionable insights for strategic growth.

Essential Data Support Engineer Interview Questions and Answers

Preparing for a data support engineer interview requires mastering both technical skills and effective communication. Common interview questions cover daily responsibilities, expertise in SQL and Python, differences between star and snowflake schemas, OLAP vs. OLTP databases, handling missing or corrupted data, and real-world project experiences. Candidates should demonstrate proficiency with ETL tools (like Apache Airflow, Talend), data modeling, troubleshooting, and data quality assurance. Interviewers also assess behavioral competencies, such as teamwork, problem-solving, stakeholder management, and adaptability under pressure. Effective preparation involves reviewing core data engineering concepts, practicing technical questions with real examples, and being ready to explain past projects and compliance knowledge (e.g., GDPR). Key tools include cloud data warehouses (Redshift, BigQuery, Snowflake), big data platforms (Hadoop, Spark), relational databases, and monitoring systems (Prometheus, Datadog). To excel, showcase your ability to design, monitor, and optimize data pipelines, ensure data quality, and communicate technical solutions clearly. Mock interviews and continuous feedback are recommended for refining your responses. Ultimately, balance technical expertise with strong interpersonal skills to stand out as a top data support engineer candidate.
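
Two of the topics listed above, star schemas and handling missing data, can be practiced together with a small in-memory database. The sketch below uses Python's built-in sqlite3 module; the table names, columns, and rows are hypothetical and exist only to show one fact table joined to one dimension table, with a missing quantity handled explicitly.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript(
    """
    -- Dimension table: one row per product (hypothetical schema).
    CREATE TABLE dim_product (
        product_id INTEGER PRIMARY KEY,
        name TEXT NOT NULL,
        category TEXT NOT NULL
    );
    -- Fact table: one row per sale, pointing at the dimension by key.
    CREATE TABLE fact_sales (
        sale_id INTEGER PRIMARY KEY,
        product_id INTEGER NOT NULL REFERENCES dim_product(product_id),
        quantity INTEGER,
        unit_price REAL NOT NULL
    );
    INSERT INTO dim_product VALUES (1, 'Keyboard', 'Accessories'),
                                   (2, 'Monitor', 'Displays');
    INSERT INTO fact_sales VALUES (1, 1, 2, 30.0),
                                  (2, 2, 1, 200.0),
                                  (3, 1, NULL, 30.0);
    """
)

# Join the fact table to its dimension. COALESCE makes the treatment of a
# missing quantity explicit (counted as 0), and the second SUM reports how
# many rows per category were affected.
query = """
    SELECT p.category,
           SUM(COALESCE(s.quantity, 0) * s.unit_price) AS revenue,
           SUM(s.quantity IS NULL) AS rows_missing_quantity
    FROM fact_sales AS s
    JOIN dim_product AS p ON p.product_id = s.product_id
    GROUP BY p.category
    ORDER BY p.category
"""
results = conn.execute(query).fetchall()
print(results)
conn.close()
```

In a snowflake schema, the category column would typically move into its own table referenced from dim_product, trading simpler queries for less redundancy.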

Professional data labeling software is essential for developing high-quality AI systems, providing the accuracy and consistency required for effective model training. These tools enable teams to efficiently label text, images, audio, and video, ensuring data meets stringent quality standards and minimizing errors or bias. Key features to seek include multi-format support, customizable annotation guidelines, complex tagging structures, multi-annotator workflows, robust quality control, workflow management, automation integration, and continuous feedback. Such capabilities streamline annotation, enhance scalability, and support both manual and automated labeling. Top brands like Labelbox, Scale AI, SuperAnnotate, Snorkel Flow, and Amazon SageMaker Ground Truth offer flexible workflows and integrations for efficient data management. Best practices for software selection include evaluating data type support, workflow efficiency, scalability, integration options, and quality control mechanisms. Quality assurance is strengthened by involving multiple annotators and leveraging automated pre-labeling with human review. This human-in-the-loop approach ensures reliable, unbiased datasets, critical in high-stakes industries like healthcare and autonomous vehicles. Ultimately, professional data labeling software underpins AI success by delivering precise, well-annotated datasets, driving superior model performance and trustworthy results.

AI-powered business intelligence (BI) revolutionizes decision-making by delivering real-time, actionable insights as data is generated. Unlike traditional BI, which focuses on historical data and delayed reporting, AI-driven platforms enable organizations to proactively identify trends, risks, and opportunities, supporting faster, smarter choices. Key features include immediate data processing, predictive analytics, automation of tasks like data cleaning and reporting, personalized dashboards, and instant alerts. These capabilities empower teams across retail, logistics, healthcare, finance, and manufacturing to optimize operations, reduce costs, and enhance customer experiences. Implementing AI-powered BI involves assessing current data systems, integrating data sources, automating analytics, customizing dashboards, and training users. Challenges such as data quality and change management can be addressed through automation and staff training. AI-powered BI is scalable and accessible for businesses of all sizes, breaking down data silos and fostering organization-wide agility. By leveraging AI for business intelligence, companies gain a significant competitive edge, achieving faster, data-driven decisions and improved business growth.

India is a global leader in Natural Language Processing (NLP) and AI innovation, with top companies such as Ksolves, Tata Elxsi, Fractal Analytics, Haptik, Arya.ai, Mad Street Den, Locus, SigTuple, and Uniphore driving advancements across diverse sectors. These firms excel in developing generative AI, large language models, conversational chatbots, fraud detection, and predictive analytics—delivering robust solutions for finance, healthcare, retail, logistics, and automotive industries. Leveraging India’s strong English language expertise and vast talent pool, these companies offer scalable, cost-effective, and culturally attuned NLP products, powering global brands and streamlining operations worldwide. Their technological stack includes open-source frameworks like TensorFlow and PyTorch, cloud platforms such as AWS and Azure, and custom-trained language models for English and regional languages. Indian NLP providers are recognized for their strict data security standards and ability to deliver multilingual and business-specific AI solutions. Noteworthy innovations include AI-powered diagnostics, multilingual chatbots, predictive analytics for retail, and advanced conversational platforms. This ongoing commitment to excellence, technical skill, and client-focused delivery ensures Indian NLP companies remain at the forefront of global AI and language technology.

Hiring data scientists for online jobs is an effective way for businesses to fill talent gaps in analytics, machine learning, and big data, especially when local candidates are scarce. By leveraging remote hiring, companies gain access to a global pool of skilled professionals, enabling faster, more flexible, and cost-efficient recruitment. Top platforms for finding remote data science talent include Upwork, Fiverr, Kaggle Jobs, DataJobs, LinkedIn, and specialized tech boards like Dice and AngelList. When hiring remotely, assess candidates through detailed resumes, online portfolios (e.g., GitHub, Kaggle), video interviews, and technical challenges to ensure technical and communication skills. Remote data scientists offer benefits such as scalability, lower overhead, and increased work-life balance, making them an attractive option for fast-growing or project-based needs. To ensure hiring success, clearly define job requirements, streamline the screening process, provide structured onboarding, and foster virtual collaboration. Essential skills for remote data scientists include expertise in Python or R, machine learning, data visualization, independent work, and strong online communication. By adopting these strategies, organizations can quickly and confidently hire data scientists online to drive data-driven growth and stay competitive.

The data strategy roadmap for the federal public service is a comprehensive plan designed to enhance data management, improve decision-making, and foster public trust across government departments. Central to the roadmap are four mission areas: Data by Design, Data for Decision-making, Enabling Data-driven Services, and Empowering the Public Service. The strategy emphasizes ethical data use, fairness, inclusion, and Indigenous data sovereignty, supported by strong governance and clear accountabilities, such as the role of Chief Data Officer. Key actions include setting data standards, supporting open data initiatives, building workforce capabilities through ongoing training, and implementing robust monitoring with performance indicators. These measures enable efficient service delivery, evidence-based policymaking, greater transparency, and improved collaboration. The roadmap also prioritizes privacy, security, and ethical practices, ensuring that sensitive data is managed responsibly. Successful implementation examples include improved pandemic response and faster project delivery through shared tools and open data. Challenges such as siloed data, skills gaps, and balancing openness with privacy are addressed through common standards, training, and privacy-by-design approaches. Ultimately, the data strategy roadmap creates a trustworthy, responsive government, empowering both public servants and citizens.

Data analytics empowers small companies to drive smarter growth by transforming everyday data into actionable insights. By analyzing information across marketing, sales, finance, and customer behavior, small businesses can identify new opportunities, optimize resources, and make informed decisions. Easy-to-use, cost-effective analytics tools like Google Analytics, Tableau, HubSpot, Zoho, and QuickBooks enable even non-technical teams to track key metrics, visualize trends, and improve performance. Starting with clear goals and relevant KPIs, small businesses can collect, clean, and analyze data, then act on findings to boost efficiency and customer satisfaction. The main benefits include improved decision-making, cost savings, targeted marketing, and agility in response to market changes. However, challenges such as limited budgets, lack of expertise, and data integration must be addressed, often with affordable tools or expert support. Consistent use of analytics fosters a data-driven culture, leading to collaboration, innovation, and sustained growth. For more guidance, resources like data science workflow guides and strategy alignment materials can help small businesses maximize the value of analytics.

The demand for industry-specific data science jobs is soaring across diverse sectors such as technology, finance, healthcare, retail, automotive, and media. Companies like Amazon, JPMorgan Chase, Tesla, and Walmart seek data professionals for roles involving AI, analytics, and automation. To stand out, candidates need strong programming skills (Python, R, SQL), expertise in machine learning and cloud platforms, and relevant domain knowledge—such as regulatory compliance in finance or EHR familiarity in healthcare. Building a public portfolio, earning certifications, and tailoring applications to specific industries significantly boost job prospects. Job seekers should utilize company career pages, LinkedIn, niche job boards, and networking to uncover opportunities, including contract roles via team augmentation programs. Effective communication, real-world problem-solving, and ongoing learning are essential, as employers value both technical proficiency and business insight. Adapting to sector-specific challenges, such as data privacy in healthcare or automated analytics in retail, further increases employability. Continuous skill development and proactive engagement with industry trends are key to securing and excelling in industry-specific data science roles.

Enroll in a data science training course in Delhi to unlock top career opportunities in India’s thriving tech and business hub. Delhi offers access to leading companies like IBM, Accenture, and Deloitte, renowned institutes (IIT Delhi, IIIT Delhi, Delhi University), and a vibrant network of seminars and meetups. Courses cover essential skills: Python, SQL, data visualization (Tableau, Power BI), machine learning, AI, and big data tools (Spark, Hadoop), with hands-on projects and real-world case studies. No advanced degree is needed—anyone with basic computer skills can join, making it ideal for students, professionals, or career switchers. Benefit from practical training, internships, and placement assistance for roles like Data Analyst, Data Scientist, Machine Learning Engineer, and Business Intelligence Analyst across IT, finance, healthcare, and more. Choose institutes with updated curriculum, experienced instructors, and strong industry ties to maximize learning and job prospects. Delhi’s affordable living, diverse job market, and active professional network make it the perfect place to launch or advance your data science career. Start your journey today for a brighter, data-driven future.

Data science team augmentation is a strategic approach for rapidly scaling companies to access skilled data scientists, engineers, and machine learning experts without the delays of traditional hiring. Unlike outsourcing or consulting, augmentation embeds external specialists directly into internal teams, enabling faster project delivery, smoother communication, and enhanced flexibility. This model is ideal for startups, scaleups, and enterprises facing talent shortages, tight deadlines, or evolving technical needs. Key benefits include immediate access to rare expertise, reduced recruitment cycles, cost efficiency, and minimized risk through real-time collaboration and knowledge sharing. Augmented teams frequently bring advanced skills in leading technologies such as TensorFlow, PyTorch, Apache Spark, Tableau, and major cloud ML platforms. Successful augmentation depends on clearly defined goals, open communication, and the right cultural fit. Best practices include selecting reputable providers with stringent vetting processes, prioritizing data privacy, and integrating external professionals seamlessly into daily workflows. Leading brands in fintech, healthcare, and ecommerce have leveraged data science team augmentation to accelerate innovation and maintain a competitive edge. For organizations aiming to scale quickly and efficiently, partnering with trusted augmentation providers offers a proven path to boost capabilities, modernize analytics, and drive faster results.

Text analysis services leverage advanced natural language processing (NLP) and machine learning to extract actionable insights from large volumes of unstructured text. These platforms enable organizations to quickly analyze customer feedback, social media, emails, business reports, and more—identifying sentiment, uncovering key themes, recognizing entities, summarizing content, and extracting keywords. The typical workflow includes data ingestion, preprocessing, analysis, extraction, and reporting, making it easy for even non-technical users to interpret results through user-friendly dashboards. Industries such as retail, e-commerce, banking, healthcare, legal, and media benefit significantly by improving products, detecting fraud, summarizing patient notes, and monitoring public opinion. Popular tools like MonkeyLearn, Google Cloud Natural Language, IBM Watson, and Amazon Comprehend offer scalable, secure, and accurate solutions, though challenges like messy data, language limitations, and nuanced context require careful data preparation and some human oversight. Choosing the right text analysis service involves evaluating accuracy, ease of use, security, integration, and scalability. By automating text analysis, organizations save time, reduce manual bias, and gain deeper insights, enabling more informed decisions and a competitive edge in a data-driven world.
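
The workflow above (ingest, preprocess, analyze, extract, report) can be illustrated with a toy keyword counter and a word-list sentiment score. This is a deliberately simple sketch using only the Python standard library, not how the commercial services named above work internally; the feedback snippets, stopword list, and sentiment word lists are all invented for the example.

```python
import re
from collections import Counter

# Hypothetical feedback snippets and word lists, invented for illustration.
feedback = [
    "Great battery life, and the screen is great too.",
    "Delivery was slow and the packaging was damaged.",
    "Battery drains fast. Poor support.",
]
STOPWORDS = {"and", "the", "is", "was", "too", "a"}
POSITIVE = {"great", "good", "excellent"}
NEGATIVE = {"slow", "damaged", "poor"}


def tokenize(text):
    """Preprocessing: lowercase, keep alphabetic tokens, drop stopwords."""
    return [w for w in re.findall(r"[a-z]+", text.lower()) if w not in STOPWORDS]


def sentiment(tokens):
    """Lexicon score: positive word count minus negative word count."""
    return sum(t in POSITIVE for t in tokens) - sum(t in NEGATIVE for t in tokens)


tokenized = [tokenize(text) for text in feedback]
keywords = Counter(token for tokens in tokenized for token in tokens)
scores = [sentiment(tokens) for tokens in tokenized]

print("top keywords:", keywords.most_common(3))
print("sentiment per document:", scores)
```

A word-list approach like this misses negation, sarcasm, and context, which is one reason the nuanced-context challenge mentioned above still calls for trained models and some human oversight.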

Time series forecasting software enables businesses and organizations to predict future trends by analyzing historical, time-based data. These tools utilize advanced statistical models, machine learning, and artificial intelligence to deliver accurate forecasts for applications like inventory management, sales prediction, budgeting, and sensor monitoring. Key features include automated data import, model selection (such as ARIMA, exponential smoothing, or neural networks), data visualization, and integration with various data sources. Leading solutions include Microsoft Azure Machine Learning, Amazon Forecast, Google Cloud AI Forecasting, Tableau, IBM SPSS, and open-source Python libraries like Prophet and TensorFlow. The software is vital for reducing costs, mitigating risks, optimizing resources, and enhancing customer satisfaction across industries including retail, finance, healthcare, energy, and transportation. Choosing the best tool depends on ease of use, flexibility, data integration, visualization, accuracy, support, and budget. While these solutions automate forecasting and improve accuracy, challenges remain with data quality, sudden market shifts, and model selection. Many platforms are beginner-friendly, support real-time data, and offer scenario analysis. Combining reliable software with human expertise delivers the most robust and actionable predictions.
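
Of the model families mentioned above, simple exponential smoothing is the easiest to write out by hand, and doing so shows what such software computes under the hood. The sketch below is a minimal version assuming a series with no trend or seasonality; the sensor readings and the smoothing factor of 0.5 are made-up values for illustration.

```python
def simple_exponential_smoothing(series, alpha):
    """Return the smoothed level after each observation.

    Each level is alpha * current value + (1 - alpha) * previous level,
    starting from the first observation.
    """
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    levels = [series[0]]
    for value in series[1:]:
        levels.append(alpha * value + (1 - alpha) * levels[-1])
    return levels


# Hypothetical sensor readings, invented for illustration.
readings = [10.0, 12.0, 11.0, 13.0]
levels = simple_exponential_smoothing(readings, alpha=0.5)

# With this method, the forecast for the next step is the last smoothed level.
next_forecast = levels[-1]
print("levels:", levels)
print("next forecast:", next_forecast)
```

A higher alpha reacts faster to recent changes but passes through more noise; a lower alpha gives a smoother, slower-moving forecast.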

Natural Language Processing (NLP) companies leverage advanced AI algorithms to transform vast amounts of text and speech into actionable insights for organizations. By teaching machines to interpret and respond to human language, NLP firms enable efficient analysis of emails, chats, documents, and audio data across industries such as healthcare, finance, retail, legal, and technology. Their solutions automate tasks like sentiment analysis, entity recognition, and intent detection, delivering real-time, accurate, and scalable results that help businesses improve decision-making, customer experience, and operational efficiency. Leading NLP companies ensure data privacy and ethical usage through encryption, anonymization, and compliance with regulations like GDPR. They also offer customizable solutions to cater to diverse languages, dialects, and industry needs. Popular tools include open-source platforms like NLTK and spaCy, alongside commercial enterprise platforms. As AI-driven chatbots, virtual assistants, and voice technologies evolve, NLP’s role in sectors such as education, fraud detection, and media monitoring continues to expand. Even small businesses can benefit from scalable NLP services to automate communication and gain valuable insights, making NLP a strategic asset for organizations of all sizes.

Cloud-based analytics services empower businesses to make real-time, data-driven decisions by collecting, processing, and analyzing information through remote servers. Unlike traditional on-premises solutions, these platforms offer instant insights without heavy infrastructure investment, leveraging AI and machine learning for rapid analysis. Real-time analytics enables organizations to quickly respond to market shifts, detect operational changes, and personalize customer experiences, delivering competitive advantages across industries such as retail, finance, healthcare, and manufacturing. Key features to look for include live data stream integration, real-time dashboards and alerts, AI/ML support, scalability, automation, security, and user-friendly interfaces. Benefits include faster decision-making, operational efficiency, cost savings, improved logistics, and enhanced customer satisfaction. Modern solutions like Oracle HeatWave and AWS cloud analytics streamline data integration and visualization, making advanced analytics accessible even for non-technical users. Getting started involves defining business goals, identifying data sources, selecting a suitable cloud analytics provider, and configuring dashboards and alerts for continuous monitoring and automation. Secure, scalable, and cost-effective, cloud-based analytics services help businesses of all sizes anticipate trends and stay ahead in the fast-paced digital landscape.

NLP solution providers are essential for businesses seeking advanced chatbots and actionable customer insights. When choosing a provider, prioritize features such as robust security (GDPR, ISO 27001, HIPAA compliance), multilingual support, easy integration with CRM and support platforms, user-friendly interfaces, customization, scalability, advanced analytics, and transparent pricing. Leading solutions like Google Dialogflow, Microsoft Azure Language Service, Amazon Lex, Rasa, and IBM Watson Assistant offer powerful tools, while regional startups provide tailored options for local markets. Top trends for 2024 include omni-channel and voice-activated chatbots, enhanced privacy, instant deployment, and richer analytics, enabling businesses to meet global customer needs and extract deep insights. NLP providers boost chatbot performance through machine translation, context recognition, automated data collection, and ongoing optimization. To get started, assess business needs, shortlist and demo providers, check integrations, review contracts, pilot deployments, and scale as needed. Industries like retail, banking, healthcare, and travel benefit most from NLP chatbots, which streamline support and gather insights across languages. Effective integration, security certifications, and analytics dashboards are key to measuring ROI and ensuring data protection.

Text analytics solutions empower organizations to transform unstructured text—such as customer feedback, social media, emails, and surveys—into actionable insights using AI and Natural Language Processing (NLP). These tools efficiently process vast volumes of written information, revealing patterns, trends, and customer sentiment while reducing manual labor and bias. Key features include robust data preparation, comprehensive NLP techniques (like sentiment analysis and topic modeling), advanced visualizations, AI automation, customization options, and strong governance. Industries such as business intelligence, healthcare, finance, government, e-commerce, and technology leverage text analytics to drive informed, data-driven decisions. Benefits include rapid data processing, consistent and objective analysis, discovery of hidden patterns, scalability, cost savings, and actionable recommendations. Leading solutions include IBM Watson, Microsoft Azure Text Analytics, Google Cloud Natural Language, and open-source tools like spaCy and NLTK. Best practices involve setting clear goals, ensuring data quality, choosing user-friendly platforms, regularly refining models, and safeguarding data privacy. Text analytics solutions are accessible to businesses of all sizes, offering high accuracy and significant value across various written data sources, helping organizations stay competitive and responsive in a data-driven world.
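As a rough illustration of the sentiment analysis technique named above, the following sketch scores text against small word lists. It is a deliberately simple lexicon-based approach, assuming hypothetical positive and negative vocabularies; it does not handle negation or sarcasm, and it is not the method used by any of the products listed.

```python
import re

# Hypothetical sentiment lexicons; real systems use far larger, weighted lists
# or trained models.
POSITIVE = {"great", "helpful", "fast", "love"}
NEGATIVE = {"slow", "broken", "late", "rude"}


def sentiment_score(text):
    """Count positive words minus negative words in the text."""
    tokens = re.findall(r"[a-z]+", text.lower())
    positives = sum(t in POSITIVE for t in tokens)
    negatives = sum(t in NEGATIVE for t in tokens)
    return positives - negatives


def sentiment_label(text):
    """Map the score to a coarse label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


for comment in [
    "Great product, fast shipping",
    "The app is slow and the login is broken",
    "Arrived on Tuesday",
]:
    print(comment, "->", sentiment_label(comment))
```

Because the same rules apply to every comment, the output is consistent across large volumes of text, which is the objectivity benefit described above; the trade-off is that nuance is lost without a richer model.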

Supply chain analytics empowers companies to cut costs and boost efficiency by leveraging data-driven strategies across procurement, inventory, and logistics. Leading organizations like Deere & Company, Starbucks, and Intel have achieved significant savings by optimizing logistics networks, implementing RFID for real-time inventory tracking, and improving delivery reliability with better third-party logistics. Tools such as Transportation Management Systems (TMS), cloud-based analytics, and advanced forecasting models enable firms to streamline operations, reduce excess inventory, and enhance supplier performance. The four main analytics techniques (descriptive, diagnostic, predictive, and prescriptive) help summarize past performance, identify inefficiencies, forecast demand, and recommend actionable improvements. Benefits include lower transportation and holding costs, improved market responsiveness, and greater supply chain visibility. Businesses can start by mapping current processes, gathering key data, and adopting scalable analytics tools, with many affordable SaaS options available. Continuous monitoring and iterative improvements ensure ongoing cost reduction and adaptability. Supply chain analytics is especially impactful in sectors like consumer goods, electronics, and food & beverage, setting industry benchmarks for efficiency and resilience.

Business intelligence (BI) services empower organizations to collect, analyze, and visualize data for faster, more informed decision-making. Leveraging BI tools like Power BI, Tableau, and Qlik, companies can turn raw data into actionable insights, automate reporting, and monitor key metrics in real time. Core benefits include accelerated reporting, deeper analytics, improved customer understanding, better inventory and risk management, and support for data-driven cultures. Successful BI implementation involves defining business objectives, assessing data quality, selecting suitable tools, integrating diverse data sources, creating intuitive dashboards, training staff, and continuously refining strategies. Essential metrics to track with BI include operational KPIs, reporting speed, inventory turnover, financial performance, data quality, compliance, and real-time operational data. Adoption challenges—such as data quality issues, user resistance, and legacy system integration—can be overcome through early data cleansing, inclusive planning, practical training, and agile improvement. BI services are vital for organizations of all sizes, enabling responsive, evidence-based strategies and sustained competitive advantage.
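One of the metrics listed above, inventory turnover, is simple enough to compute directly. The sketch below assumes the common definition of cost of goods sold divided by average inventory (some organizations use sales in the numerator instead); the function names and figures are hypothetical.

```python
def inventory_turnover(cost_of_goods_sold, opening_inventory, closing_inventory):
    """Inventory turnover = COGS / average inventory over the period."""
    average_inventory = (opening_inventory + closing_inventory) / 2
    if average_inventory <= 0:
        raise ValueError("average inventory must be positive")
    return cost_of_goods_sold / average_inventory


def days_of_inventory(turnover, days_in_period=365):
    """Average number of days stock is held before it is sold."""
    return days_in_period / turnover


# Hypothetical annual figures.
turnover = inventory_turnover(
    cost_of_goods_sold=600_000,
    opening_inventory=90_000,
    closing_inventory=110_000,
)
print(turnover)                            # 6.0
print(round(days_of_inventory(turnover)))  # 61
```

In a BI tool this calculation would typically live in a dashboard measure that refreshes with the underlying data rather than in a standalone script.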

A time series service is essential for real-time streaming analytics, enabling organizations to process and analyze fast-moving, time-stamped data from sources like IoT sensors, financial transactions, and application logs. Unlike traditional batch systems, which process data in scheduled chunks after it has accumulated, time series services ingest records continuously and in chronological order, supporting instant insights, anomaly detection, and predictive analytics across industries such as finance, manufacturing, energy, healthcare, and logistics. Streaming platforms like Apache Kafka, Amazon Kinesis, and Apache Pulsar, along with processing frameworks like Apache Flink and Spark Streaming, offer scalable, distributed solutions for handling high-velocity data streams. Key features of effective time series services include continuous data ingestion, strict ordering, automatic scaling, fault tolerance, end-to-end security, and robust monitoring. Best practices for integration involve matching tools to workloads, optimizing partitioning, replicating data for resilience, encrypting data and controlling access, and monitoring system performance. Time series services empower businesses to make data-driven decisions in real time, reduce downtime, improve operational efficiency, and gain a competitive edge.
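The anomaly detection mentioned above can be illustrated with a rolling-window check over time-ordered readings. This is a minimal in-memory sketch that assumes readings arrive as `(timestamp, value)` pairs already in chronological order; the function name, window size, threshold, and sensor data are hypothetical, and a production system would read from a stream such as a Kafka topic rather than a list.

```python
from collections import deque
from statistics import mean, stdev


def detect_anomalies(readings, window=5, threshold=3.0):
    """Yield (timestamp, value) pairs whose value deviates from the mean of the
    preceding `window` readings by more than `threshold` standard deviations."""
    recent = deque(maxlen=window)
    for timestamp, value in readings:
        if len(recent) == window:
            mu = mean(recent)
            sigma = stdev(recent)
            if sigma > 0 and abs(value - mu) > threshold * sigma:
                yield timestamp, value
        # The deque drops the oldest reading automatically once it is full.
        recent.append(value)


# Hypothetical temperature readings from one sensor, in arrival order.
stream = [
    (0, 20.1), (1, 20.3), (2, 19.9), (3, 20.0), (4, 20.2),
    (5, 35.0),  # sudden spike
    (6, 20.1),
]
anomalies = list(detect_anomalies(stream))
print(anomalies)  # [(5, 35.0)]
```

Because the function is a generator, each reading is evaluated as it arrives instead of after a batch completes. Note that the spike itself enters the window afterwards and temporarily widens the tolerance, which is one reason real deployments often use more robust statistics.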

Cloud-based analytics empowers businesses with faster, more insightful reporting by leveraging scalable, secure, internet-accessible platforms. Unlike traditional on-premises tools, cloud analytics enables real-time data processing, instant access to key metrics, and seamless collaboration from any location or device. Leading providers such as Microsoft Azure, Google Cloud, and Amazon Web Services offer robust solutions that eliminate manual data exports and slow queries, allowing teams to make data-driven decisions quickly. Key features to prioritize include real-time data processing, easy integration with existing systems, customizable dashboards, and strong security measures like encryption and multi-factor authentication. Cloud analytics also supports business growth by enabling rapid innovation, accurate performance tracking, and agile responses to market changes. Popular tools such as Tableau Cloud (formerly Tableau Online), Looker Studio (formerly Google Data Studio), and Microsoft Power BI are known for user-friendly interfaces and powerful integrations. Transitioning to the cloud involves assessing current processes, selecting the right platform, ensuring data security, and training teams for optimal adoption. While migration may pose challenges, clear planning and ongoing communication maximize returns. Ultimately, cloud-based analytics offers businesses of all sizes a competitive edge through faster reporting, improved accuracy, cost efficiency, and secure, collaborative access to data insights.

Data science consulting jobs offer dynamic opportunities for professionals skilled in data analysis, machine learning, and business strategy. These roles involve advising companies across industries—such as finance, healthcare, retail, manufacturing, and marketing—on leveraging data to solve business problems and enhance decision-making. Typical responsibilities include client consultations, data collection and cleaning, exploratory data analysis, predictive modeling, and presenting actionable insights through clear reports and visualizations. To qualify, candidates usually need a bachelor’s or master’s degree in statistics, mathematics, computer science, or a related field, along with expertise in programming languages like Python or R, statistical analysis, machine learning, data visualization, and strong communication skills. Industry-specific experience or certifications in analytics and cloud platforms (AWS, Azure) can boost employability. Job seekers can find opportunities on company websites, job boards, professional networks, and freelance platforms. Entry-level applicants should highlight relevant projects, industry knowledge, and communication abilities. While data science consulting offers variety, learning, and career growth, it can involve tight deadlines and shifting client demands. Building a solid technical foundation, gaining hands-on experience, and networking are key steps for launching a successful consulting career in data science.

A data-driven creative agency expertly blends artistic vision with advanced analytics to deliver marketing campaigns that are both inspiring and measurable. By leveraging audience insights from digital footprints, sentiment analysis, and real-time feedback, these agencies create targeted, emotionally resonant brand experiences that drive real results. Their process includes audience listening, comprehensive data collection, insight generation, collaborative ideation, tailored content creation, and ongoing optimization. This approach benefits industries like healthcare, financial services, and fast-moving consumer goods, where trust, relevance, and rapid adaptation are essential. Key advantages include precise storytelling, measurable returns, strategic creativity, and a strong competitive edge. Businesses of any size can partner with these agencies by clearly defining objectives, reviewing past campaigns, ensuring robust analytics capabilities, and setting transparent performance metrics. Leveraging AI-powered tools and automated reporting, data-driven creative agencies enable brands to lead conversations and maximize marketing ROI through informed, impactful storytelling and continuous campaign improvement.