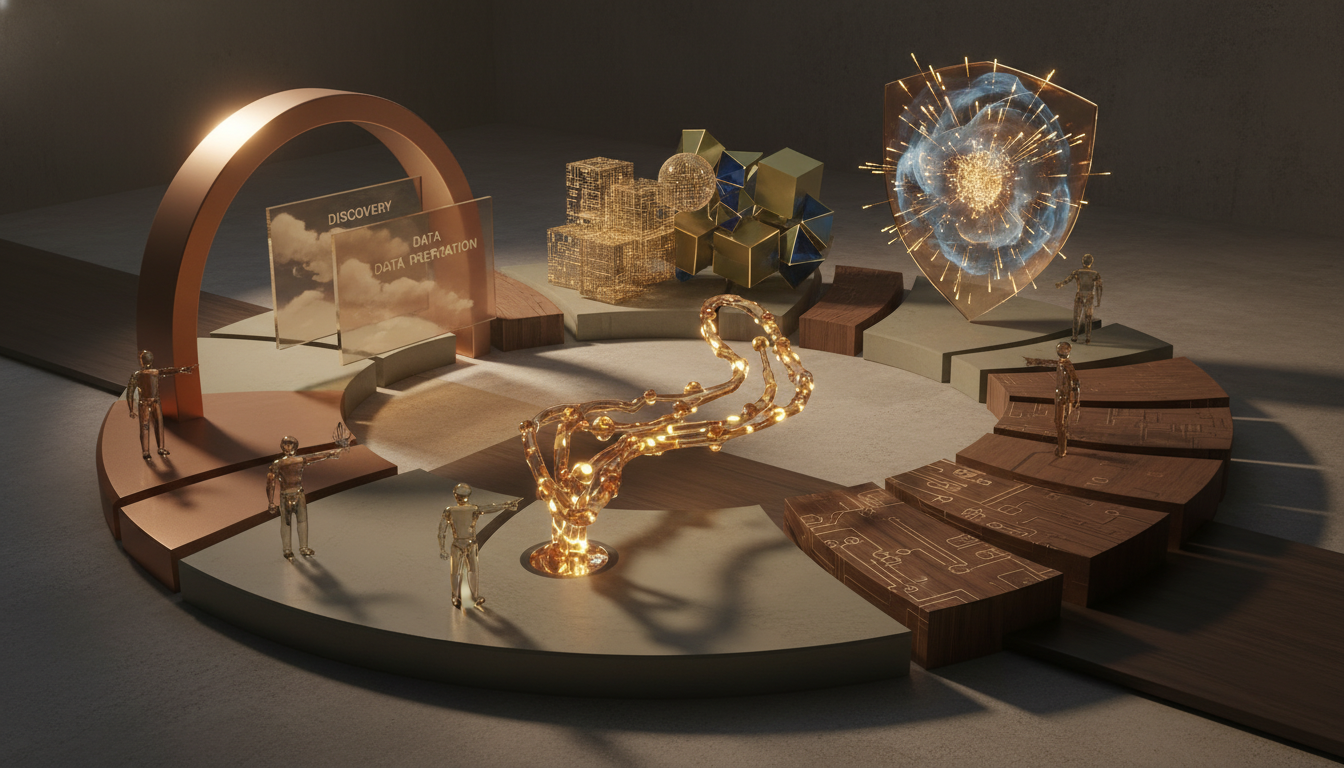

Business AI Implementation Services with a Clear Delivery Framework

Business AI implementation services help companies move from ideas to working tools through a planned, step-by-step method. A clear delivery framework matters because it reduces risk, sets goals early, keeps teams aligned, and turns pilot projects into useful business results. Instead of buying software and hoping for the best, businesses use a structured approach to choose use cases, prepare data, test safely, train staff, and measure value over time.

Many firms want artificial intelligence, but they do not need hype. They need outcomes. That may mean faster customer support, better demand forecasts, lower operating costs, or stronger fraud detection. Good delivery starts with the business problem, not the model. It asks what decision needs support, what data is available, what process will change, and who will own the result after launch.

That practical focus is why business AI implementation services are growing across retail, finance, healthcare, logistics, and professional services. Leaders have seen examples from Microsoft, Google Cloud, AWS, Salesforce, and IBM, but success usually depends less on brand choice and more on execution quality. Even the best tools fail if data is weak, team roles are unclear, or deployment has no governance.

What makes a clear delivery framework so important?

A delivery framework gives the project shape. It defines phases, roles, checkpoints, and success measures. Without it, teams often jump from excitement to confusion. One group wants automation, another wants insights, and a third worries about privacy. A clear framework brings these needs together in one plan that everyone can follow.

It also protects budget and time. AI projects can grow too fast when scope is vague. For example, a company may start with a chatbot, then add analytics, workflow redesign, and customer data integration without new approvals. A framework sets boundaries. It says what is in the first release, what comes later, and what evidence is required before the next step begins.

Most importantly, it connects technical work to business value. Data scientists may care about accuracy, while operations leaders care about speed, cost, and service levels. A framework translates between them. It turns technical measures into business outcomes that leaders can understand and act on.

What are the core parts of business AI implementation services?

Strong business AI implementation services usually include strategy, delivery, governance, and adoption support. These parts work together. If one is missing, the project may launch but fail to create lasting value.

1. Discovery and use case selection

The first step is to identify problems worth solving. Teams review workflows, pain points, and goals. They rank ideas by value, risk, data readiness, and ease of implementation. This prevents firms from choosing flashy use cases that look good in slides but produce little real benefit.
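The ranking step can be sketched as a simple weighted score. This is a minimal illustration, not a standard method: the weights, the 1 to 5 scales, and the two example use cases are all hypothetical and would be agreed with stakeholders in a real project.

```python
from dataclasses import dataclass

# Hypothetical weights; a real project would agree these with stakeholders.
WEIGHTS = {"value": 0.4, "risk": 0.2, "data_readiness": 0.2, "ease": 0.2}


@dataclass
class UseCase:
    name: str
    value: int           # 1 (low) to 5 (high) expected business value
    risk: int            # 1 (low) to 5 (high); higher risk lowers the score
    data_readiness: int  # 1 (poor) to 5 (ready to use)
    ease: int            # 1 (hard) to 5 (easy) to implement


def score(uc: UseCase) -> float:
    """Weighted score; risk is inverted so low-risk ideas score higher."""
    total = (
        WEIGHTS["value"] * uc.value
        + WEIGHTS["risk"] * (6 - uc.risk)
        + WEIGHTS["data_readiness"] * uc.data_readiness
        + WEIGHTS["ease"] * uc.ease
    )
    return round(total, 2)


def rank(use_cases):
    """Return use cases sorted from highest to lowest score."""
    return sorted(use_cases, key=score, reverse=True)


# Illustrative candidates only.
candidates = [
    UseCase("Fully custom large model", value=5, risk=5, data_readiness=2, ease=1),
    UseCase("Support ticket triage", value=4, risk=2, data_readiness=4, ease=4),
]
ranked = rank(candidates)
for uc in ranked:
    print(f"{uc.name}: {score(uc)}")
```

With these made-up inputs, the modest triage idea outranks the flashier custom model because it scores better on risk, data readiness, and ease.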

2. Data assessment and preparation

AI depends on data quality. At this stage, teams check where data lives, how clean it is, who owns it, and whether it can be used legally and ethically. Many delays happen here. Missing fields, duplicate records, and unclear labels can slow progress more than model building.
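A first-pass data check can be very small. The sketch below counts missing required fields and duplicate keys in a list of records. The field names (`customer_id`, `email`, `segment`) and the sample rows are hypothetical, and a real assessment would also cover ownership, labels, and legal use.

```python
from collections import Counter


def profile(records, required_fields, key_field):
    """Summarise missing required fields and duplicate keys in a list of dicts."""
    missing = Counter()
    for row in records:
        for field in required_fields:
            # Treat None and empty strings as missing values.
            if row.get(field) in (None, ""):
                missing[field] += 1
    key_counts = Counter(row.get(key_field) for row in records)
    duplicates = sorted(k for k, n in key_counts.items() if k is not None and n > 1)
    return {
        "rows": len(records),
        "missing": dict(missing),
        "duplicate_keys": duplicates,
    }


# Hypothetical sample data for illustration.
sample = [
    {"customer_id": "C1", "email": "a@example.com", "segment": "retail"},
    {"customer_id": "C2", "email": "", "segment": "retail"},
    {"customer_id": "C1", "email": "a@example.com", "segment": None},
]
report = profile(sample, required_fields=["email", "segment"], key_field="customer_id")
print(report)
```

A report like this gives teams a concrete list of gaps to discuss before any model work begins.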

3. Solution design and model selection

Next, teams decide whether the job needs machine learning, generative AI, rules-based automation, or a mix. In many business settings, the simplest tool wins. A demand forecast model, document classifier, or retrieval system may beat a large and expensive custom model.

4. Pilot, testing, and validation

Before a full rollout, the solution is tested in a controlled setting. Teams check accuracy, bias, latency, user feedback, and business fit. This stage answers a practical question: does the tool help people work better in the real process, not just in a demo?

5. Deployment and change management

Implementation does not end when the tool goes live. Users need training, support, and clear guidance. Managers need dashboards. Technical teams need monitoring. Especially for firms exploring AI in core operations, adoption succeeds when people understand why the tool exists and how it improves daily work.

6. Governance and ongoing improvement

After launch, the service should include risk reviews, model monitoring, retraining plans, and policy controls. Markets change, customer behavior shifts, and data drifts. A useful framework plans for this from the start.
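One common way to watch for data drift is the Population Stability Index (PSI), which compares how a feature was distributed at training time with how it looks now. The sketch below is one possible monitoring check, not a prescribed one. The bin edges, sample values, and the 0.25 alert threshold (an often-quoted rule of thumb) are assumptions for illustration.

```python
import math


def psi(expected, actual, edges):
    """Population Stability Index between two non-empty samples.

    `edges` are ascending bin boundaries shared by both samples.
    """
    def shares(values):
        counts = [0] * (len(edges) + 1)
        for v in values:
            # Bin index = number of edges at or below the value.
            idx = sum(v >= e for e in edges)
            counts[idx] += 1
        total = len(values)
        # A small floor avoids log(0) when a bin is empty.
        return [max(c / total, 1e-6) for c in counts]

    e, a = shares(expected), shares(actual)
    return sum((ai - ei) * math.log(ai / ei) for ei, ai in zip(e, a))


# Hypothetical order values at training time and in recent traffic.
baseline = [10, 20, 30, 40]
recent = [30, 40, 50, 60]
edges = [25, 45]

ALERT_THRESHOLD = 0.25  # assumed rule of thumb, tune per use case
drifted = psi(baseline, recent, edges) > ALERT_THRESHOLD
print("retraining review needed:", drifted)
```

A check like this can run on a schedule and feed the risk reviews and retraining plans described above.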

How does a structured approach to business AI implementation work in practice?

A structured approach to business AI implementation often follows a simple sequence. The exact names vary by provider, but the logic stays similar.

- Define the business objective and expected value.

- Map stakeholders, owners, and decision rights.

- Audit data sources, quality, and access rules.

- Select the solution type and technical architecture.

- Build a pilot with clear acceptance criteria.

- Test with users in a controlled environment.

- Deploy into the workflow with training and controls.

- Measure outcomes and improve based on results.

This order matters because each step reduces uncertainty. Companies often want to skip ahead to tools, but doing so can create rework. For instance, if no one agrees on the target process, deployment becomes messy. If legal review happens late, launch may be delayed. If user training is ignored, adoption may stay low even when the model performs well.

A structured process also supports communication. Executives see milestones and budgets. Managers understand process changes. Technical teams know what to build and when. That alignment is one of the main reasons best practices for AI implementation in business focus as much on governance as on technology.

How can businesses measure success in AI implementation?

Measuring success in AI implementation starts before development. Teams should define a baseline, choose a few meaningful metrics, and decide how they will be tracked after launch. Without a baseline, it is hard to prove whether the solution improved anything.

Useful measures often fall into four groups:

- Operational metrics: cycle time, error rate, ticket volume, handling time, or downtime.

- Financial metrics: cost savings, revenue lift, margin improvement, or reduced losses.

- User metrics: adoption rate, satisfaction, override rate, or time saved per employee.

- Model metrics: precision, recall, accuracy, drift, and response speed.
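The model metrics above can be computed from four counts. The sketch below uses hypothetical numbers for a fraud-style classifier: true positives, false positives, false negatives, and true negatives from a pilot sample.

```python
def classification_metrics(tp, fp, fn, tn):
    """Precision, recall, and accuracy from confusion-matrix counts."""
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    accuracy = (tp + tn) / (tp + fp + fn + tn)
    return {"precision": precision, "recall": recall, "accuracy": accuracy}


# Hypothetical pilot results: 1,000 reviewed transactions.
m = classification_metrics(tp=80, fp=20, fn=10, tn=890)
for name, value in m.items():
    print(f"{name}: {value:.3f}")
```

In this made-up sample, accuracy looks high because most transactions are legitimate, while precision and recall show how the model handles the rarer fraud cases. That is one reason to track more than a single number.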

Take a customer service case. A generative assistant may reduce average handle time by 20 percent, increase first contact resolution, and shorten staff onboarding. Those are meaningful gains. In a forecasting case, success may mean fewer stockouts, lower excess inventory, and better planning confidence.
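The arithmetic behind a claim such as "20 percent lower handle time" is a comparison against the baseline. The figures below are hypothetical and only show the calculation.

```python
def percent_change(baseline, current):
    """Relative change from baseline, in percent (negative means a reduction)."""
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (current - baseline) / baseline * 100


# Hypothetical figures measured before and after the pilot.
before = {"avg_handle_time_min": 10.0, "first_contact_resolution_pct": 70.0}
after = {"avg_handle_time_min": 8.0, "first_contact_resolution_pct": 77.0}

changes = {k: round(percent_change(before[k], after[k]), 1) for k in before}
print(changes)
```

Here, handle time falling from 10 to 8 minutes is a 20 percent reduction, and first contact resolution rising from 70 to 77 percent is a 10 percent relative gain.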

Many providers use a phased scorecard. During the pilot, they focus on technical quality and workflow fit. During rollout, they track adoption and stability. After scale-up, they measure commercial value. This staged view is practical because not every metric matters equally at every phase.

What challenges do companies face, and how does the framework help?

The challenges in business AI projects, and the plans to mitigate them, should be discussed early, not after things go wrong. Most companies face a mix of technical, organizational, and regulatory issues.

Common challenges

- Poor or fragmented data across systems.

- Unclear ownership between business and technical teams.

- Scope creep and shifting priorities.

- Weak user adoption due to fear or confusion.

- Privacy, security, or compliance concerns.

- Difficulty moving from pilot to production.

A clear framework addresses these issues by assigning owners, setting checkpoints, and defining approval steps. If data quality is weak, the framework can require remediation before model training. If stakeholders disagree, it can use a steering group to make decisions. If legal risk is high, governance gates can pause the project until controls are in place.
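Governance gates can be pictured as an ordered checklist in which the project stops at the first gate with an open item. The sketch below is one hypothetical way to model that. The gate names, owners, and checks are illustrative, not a standard.

```python
from dataclasses import dataclass, field


@dataclass
class Gate:
    name: str
    owner: str
    checks: dict = field(default_factory=dict)  # check name -> passed?

    def blockers(self):
        """Names of checks that have not passed yet."""
        return [check for check, ok in self.checks.items() if not ok]


def first_blocked_gate(gates):
    """Return the first gate with open blockers, or None if all gates pass."""
    for gate in gates:
        if gate.blockers():
            return gate
    return None


# Hypothetical gates for a pilot.
gates = [
    Gate("Data readiness", "Data owner",
         {"duplicates resolved": True, "labels reviewed": True}),
    Gate("Legal review", "Compliance lead",
         {"privacy assessment signed off": False}),
    Gate("Pilot acceptance", "Steering group",
         {"acceptance criteria met": False}),
]

blocked = first_blocked_gate(gates)
if blocked:
    print(f"Paused at '{blocked.name}' (owner: {blocked.owner}): {blocked.blockers()}")
```

Each gate has a named owner, so it is clear who must act before the project moves on.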

The framework also improves trust. People are more willing to use AI when they know the limits, review process, and escalation path. In regulated sectors, that trust is essential. Healthcare teams may need human review for clinical support tools. Finance teams may need audit trails for risk decisions. The framework makes those controls visible and repeatable.

Which best practices improve results?

Best practices for AI implementation in business are usually simple, even if the technology is complex. Start narrow. Pick one high-value process. Use available data first. Involve end users early. Define success in plain business terms. Build governance into the project from the start rather than adding it later.

It also helps to separate experimentation from production. Teams can test ideas quickly in a sandbox, but live systems need reliability, security, and support. Mature providers often use MLOps or similar operational practices to manage versioning, deployment, and monitoring.

Another best practice is to design for adoption. If a tool adds steps, users may avoid it. If it fits naturally inside Microsoft Teams, Slack, Salesforce, or an existing dashboard, usage is more likely to grow. Good implementation services think about these details from the start.

How should a business choose the right service partner?

Look for a partner that can explain the process clearly, not just the technology. Ask how they select use cases, handle data readiness, manage risk, and measure value. Request examples of pilots that became full deployments. A strong partner should be able to describe what went wrong in past projects and how they fixed it.

You should also ask about team mix. Effective delivery usually needs business analysts, data engineers, solution architects, project managers, and change specialists, not only model builders. Finally, review how the partner plans handover. Your internal team should understand the solution well enough to run and improve it after launch.

FAQ

How long do business AI implementation projects usually take?

Small pilots can take a few weeks, while full implementations may take several months. Timing depends on data quality, integration needs, governance reviews, and how much change the workflow requires.

Do companies need perfect data before starting?

No, but they do need usable data for the first use case. A framework helps teams judge whether data is good enough to begin, what gaps matter most, and what cleanup should happen before scaling.

Is generative AI always the best option?

Not always. Some business problems are better solved with standard machine learning, analytics, or rule-based automation. The best choice depends on the task, risk level, cost, and the quality of available data.

What is the main sign that an AI rollout is working?

The clearest sign is measurable business improvement that continues after launch. That includes steady user adoption, reliable performance, and visible gains in speed, quality, revenue, or cost control.